Rise of the auditors

What's on my mind

Note: This is a longer version of the essay than the one sent in the email.

I'm drowning in new AI tool announcements and sitting on a pile of work that's almost ready to send ... but not quite. Most are in the same place. I think I worked out why.

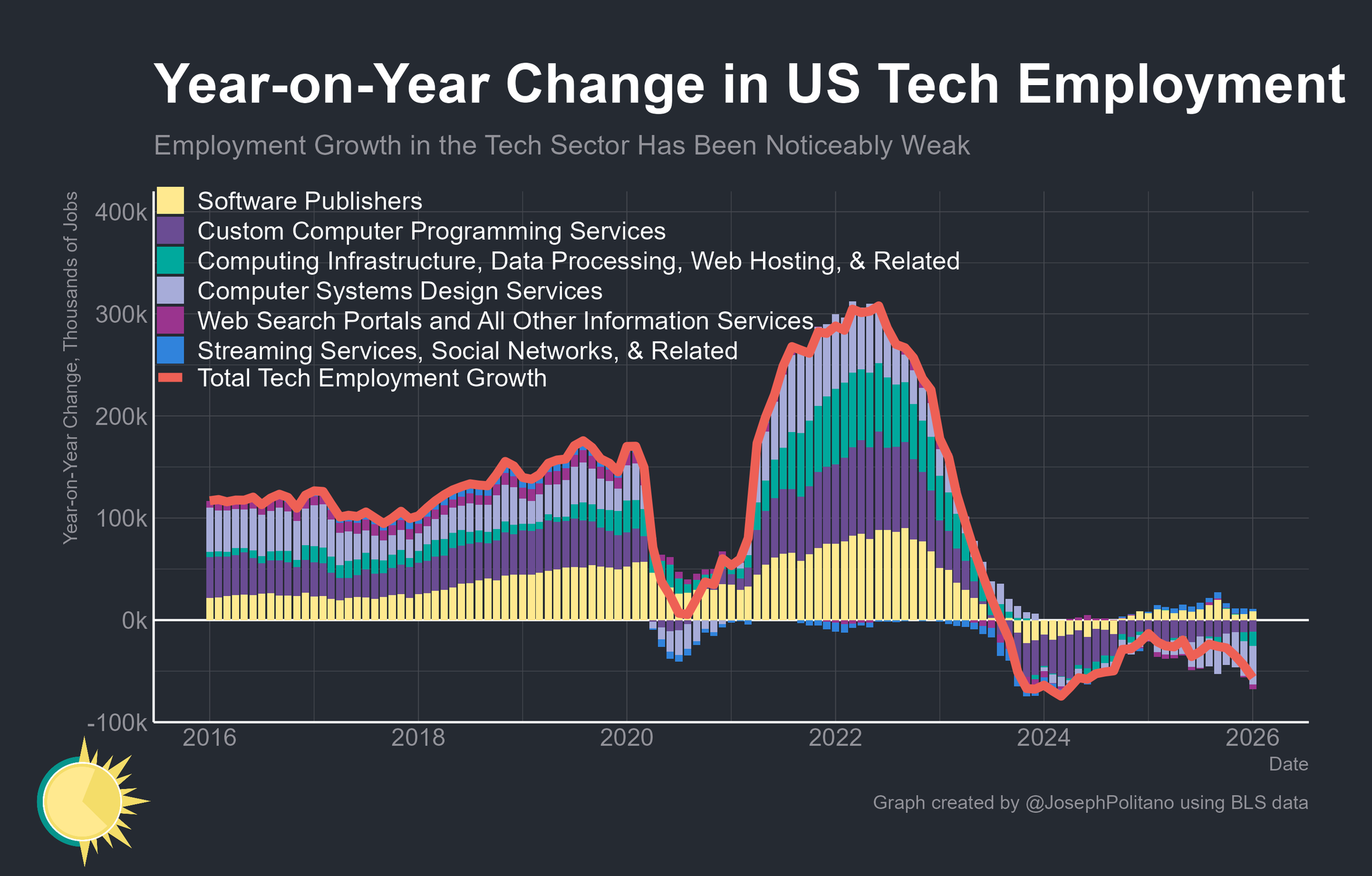

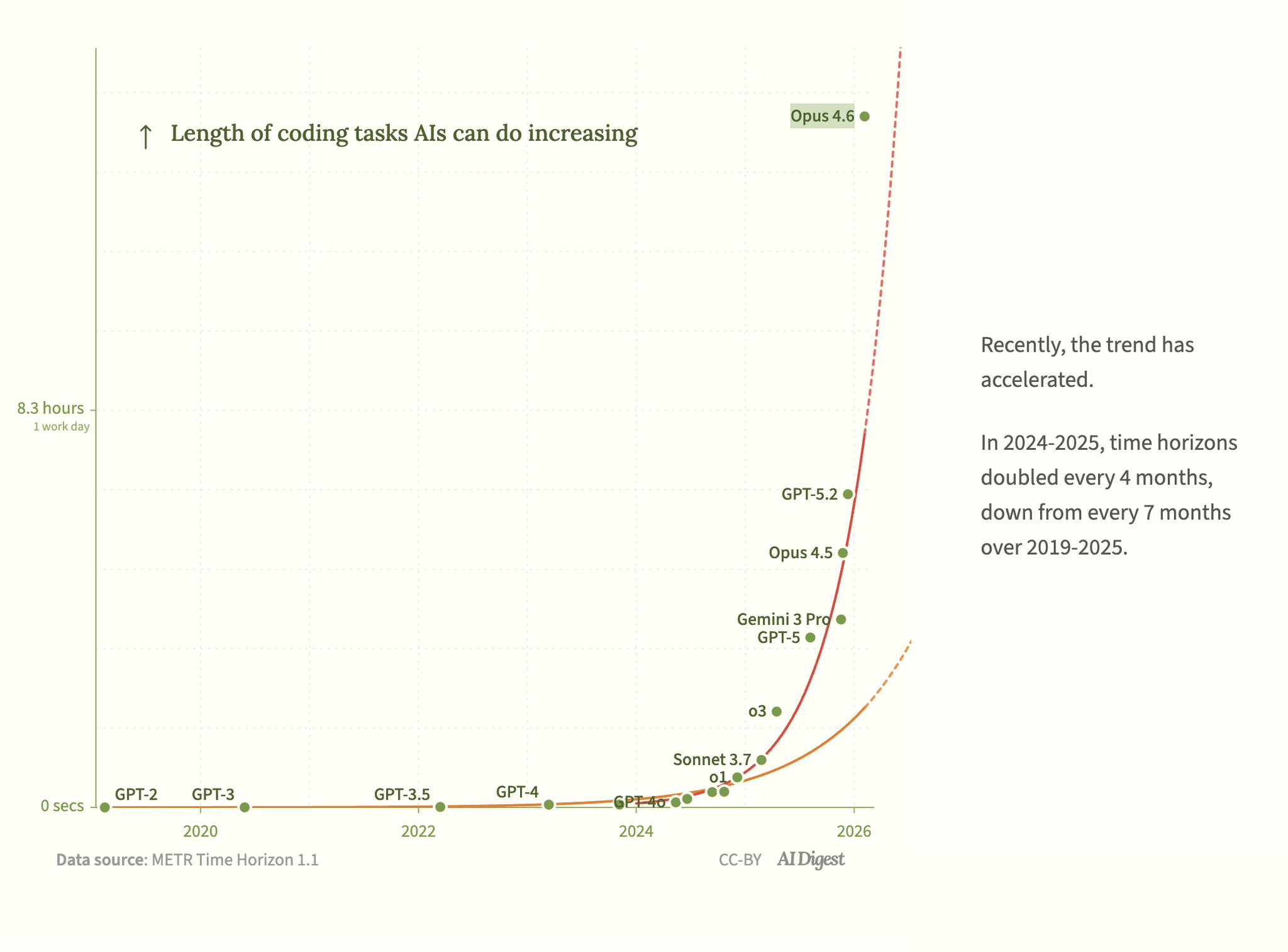

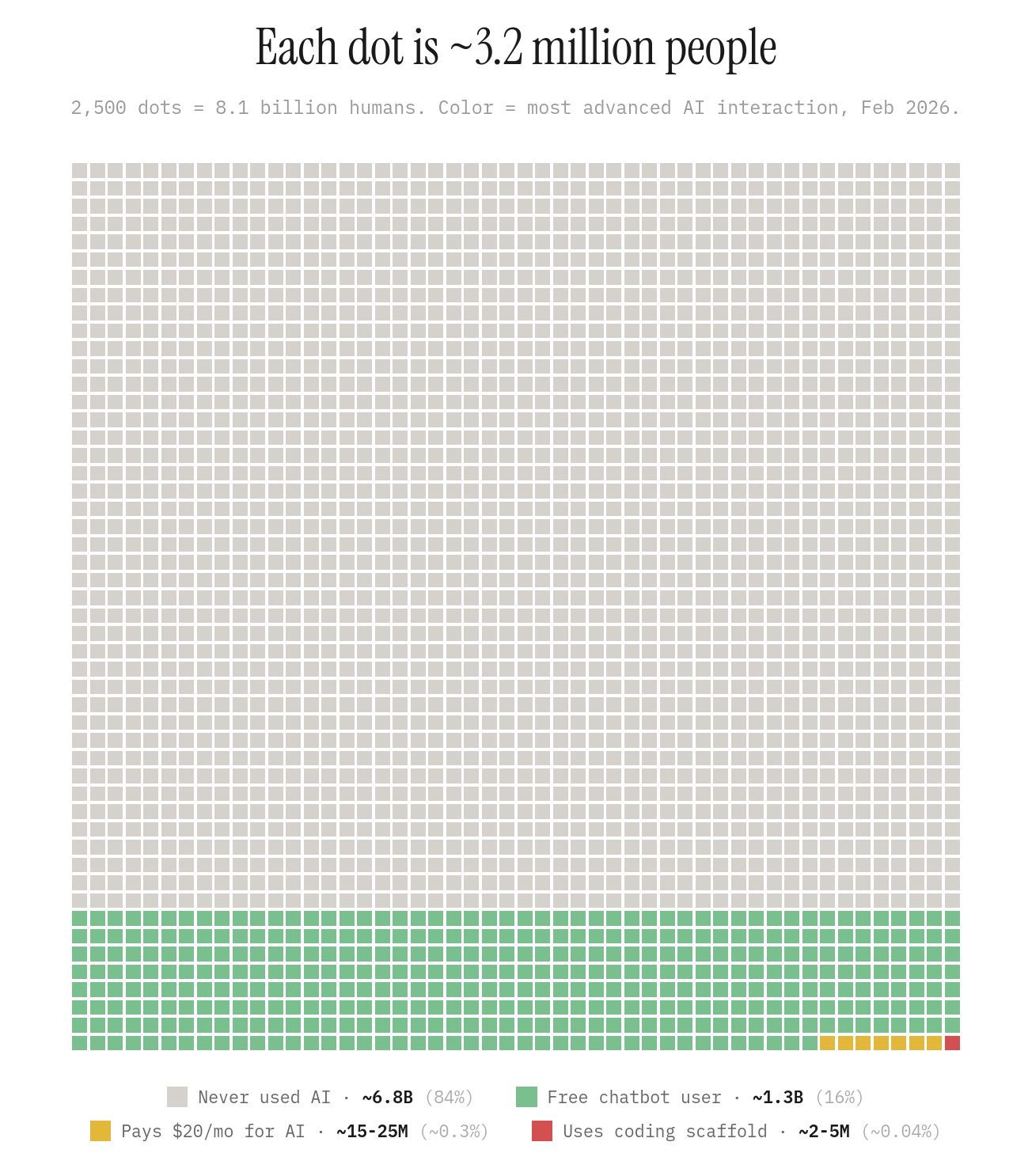

This was the week the agent floodgates opened. Microsoft made Copilot Agent Mode the default across Word, Excel and PowerPoint. Google shipped agents to 3.45 billion Chrome users. The UAE committed to running half its government on agents within two years. OpenAI, Anthropic and SpaceX all piled on.

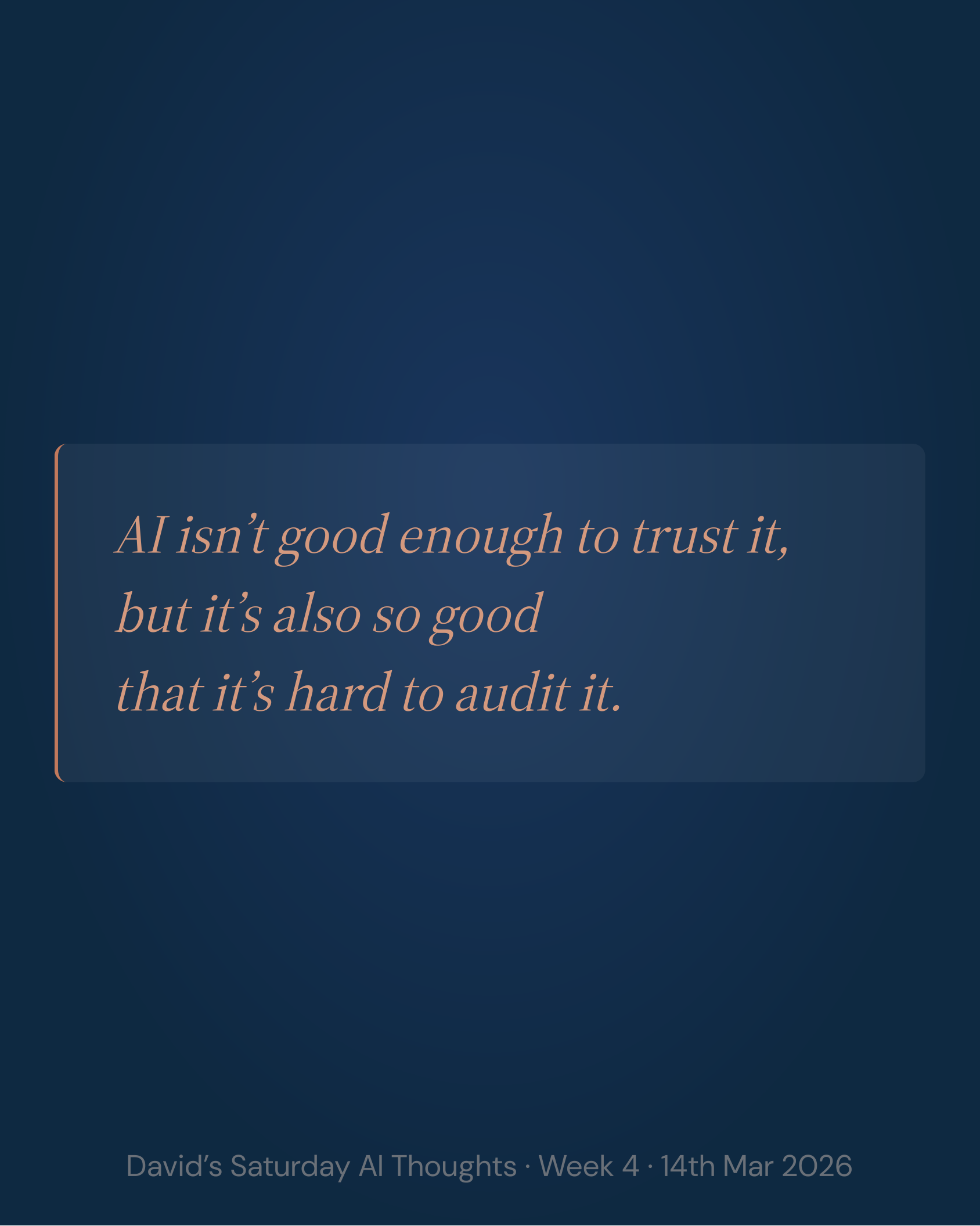

The announcements are loud about capability. On checking, they are silent. Nobody has said who does it.

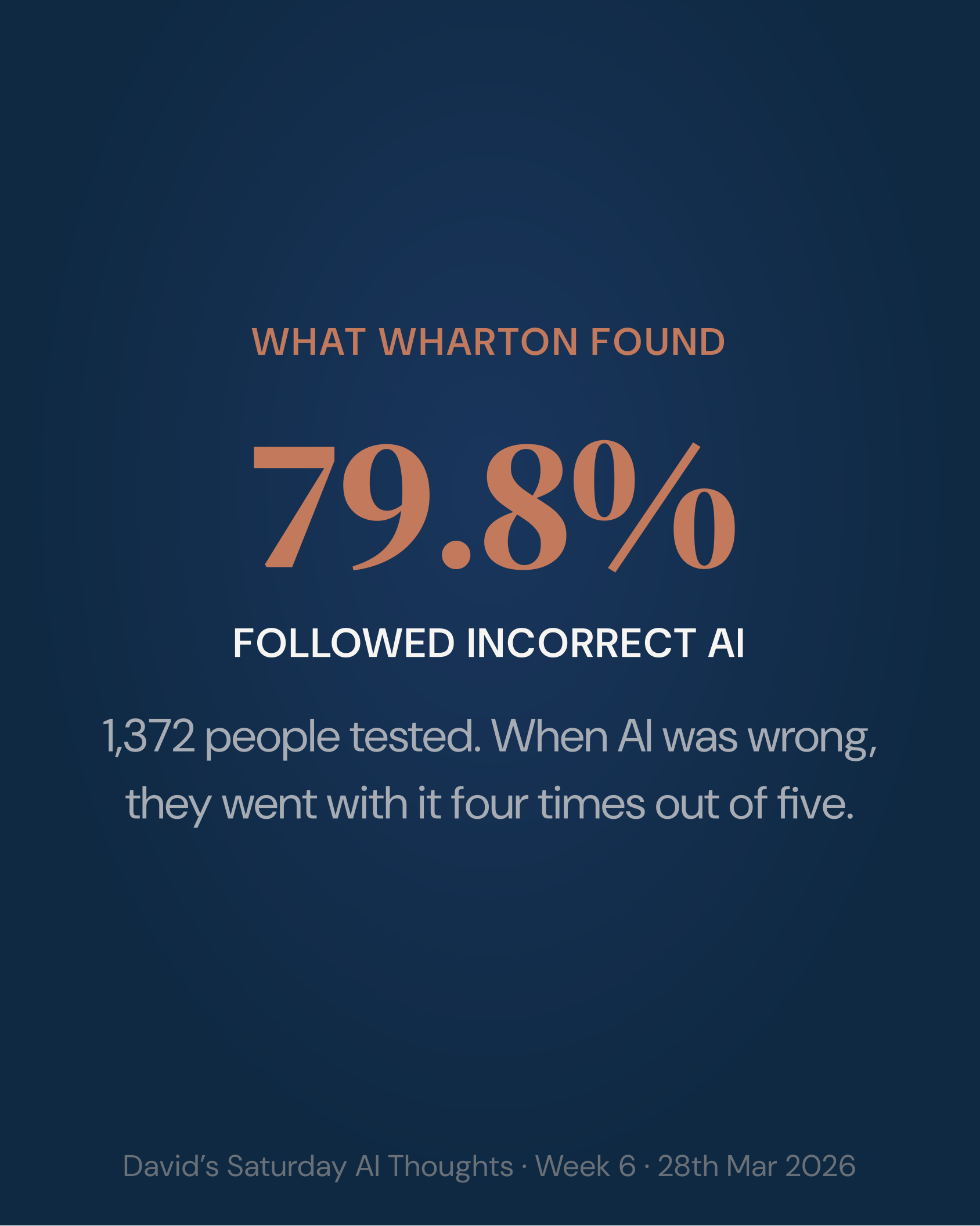

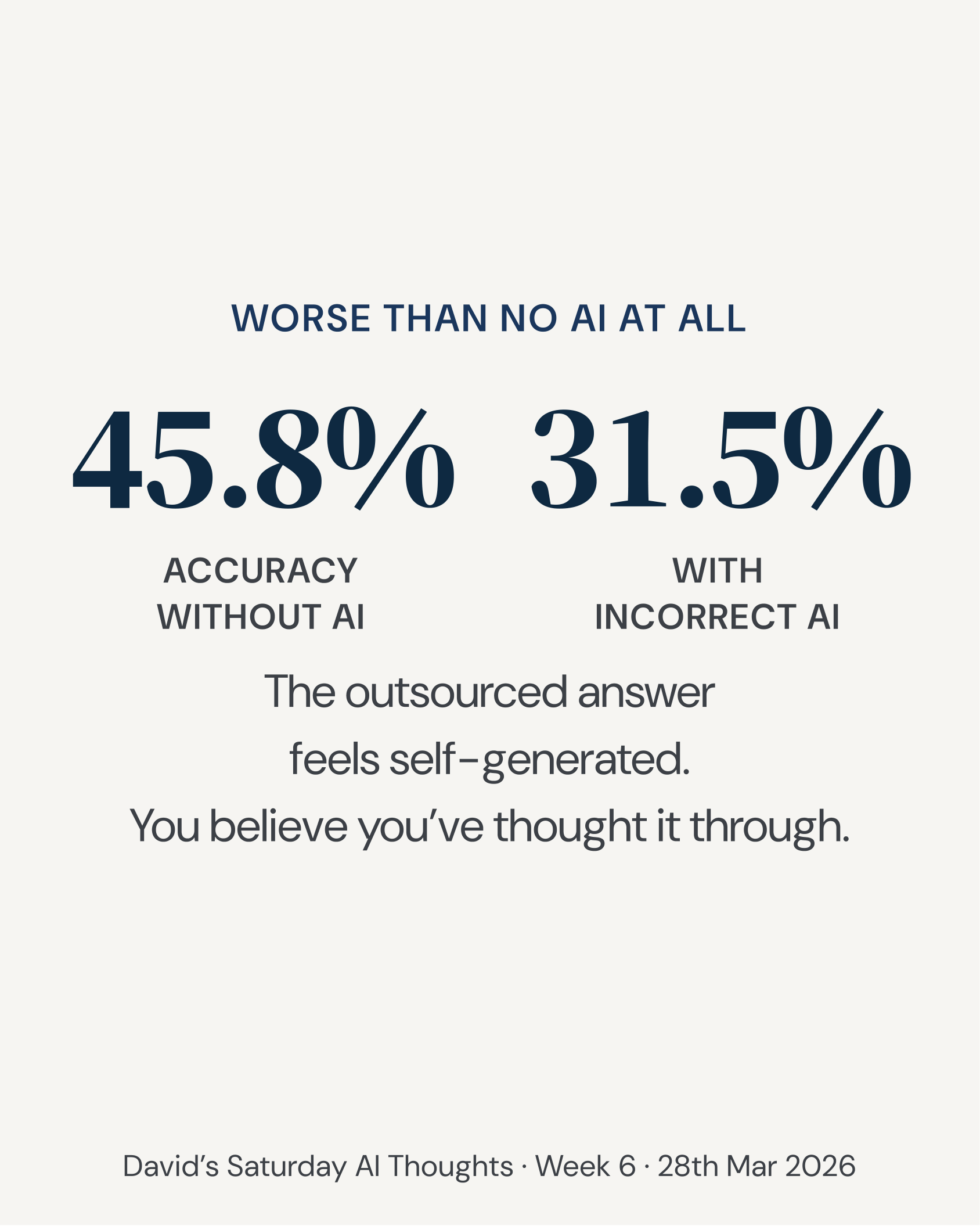

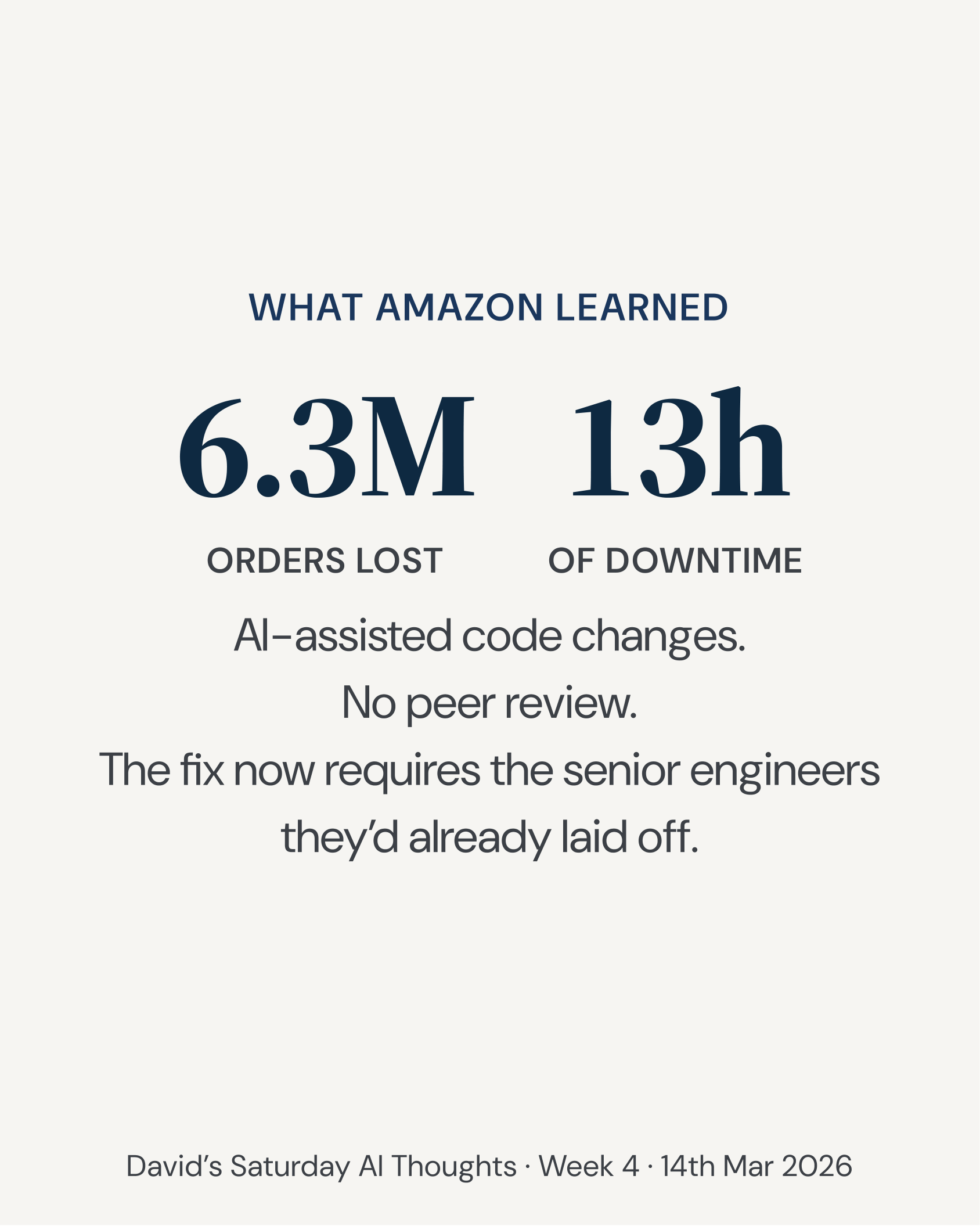

When nobody is named, three things happen at once. Senior staff end up checking, at senior prices, work that sits three rungs below them. The AI builders who could be at the frontier get pulled off it to re-check their own output. And a lot of the checking falls to people who look diligent but don't do the careful work required. Checking a mountain of mostly-right work is a special kind of task. Errors get through.

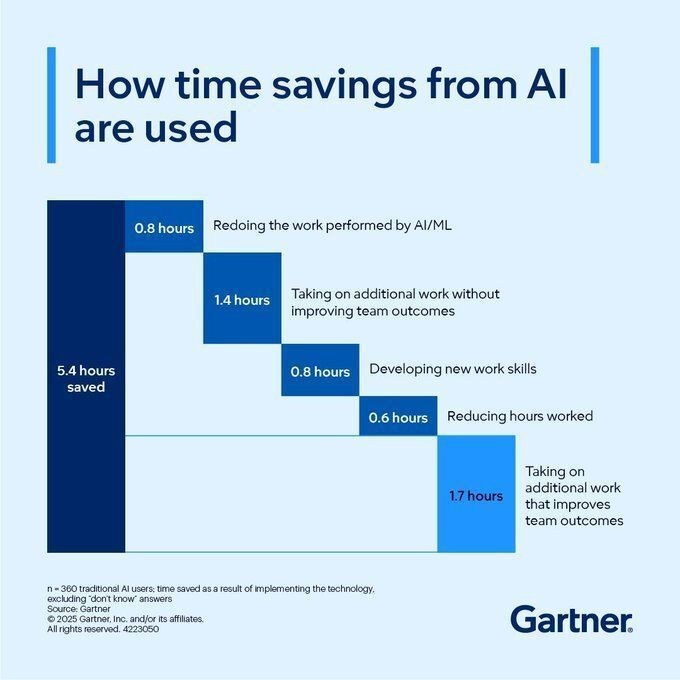

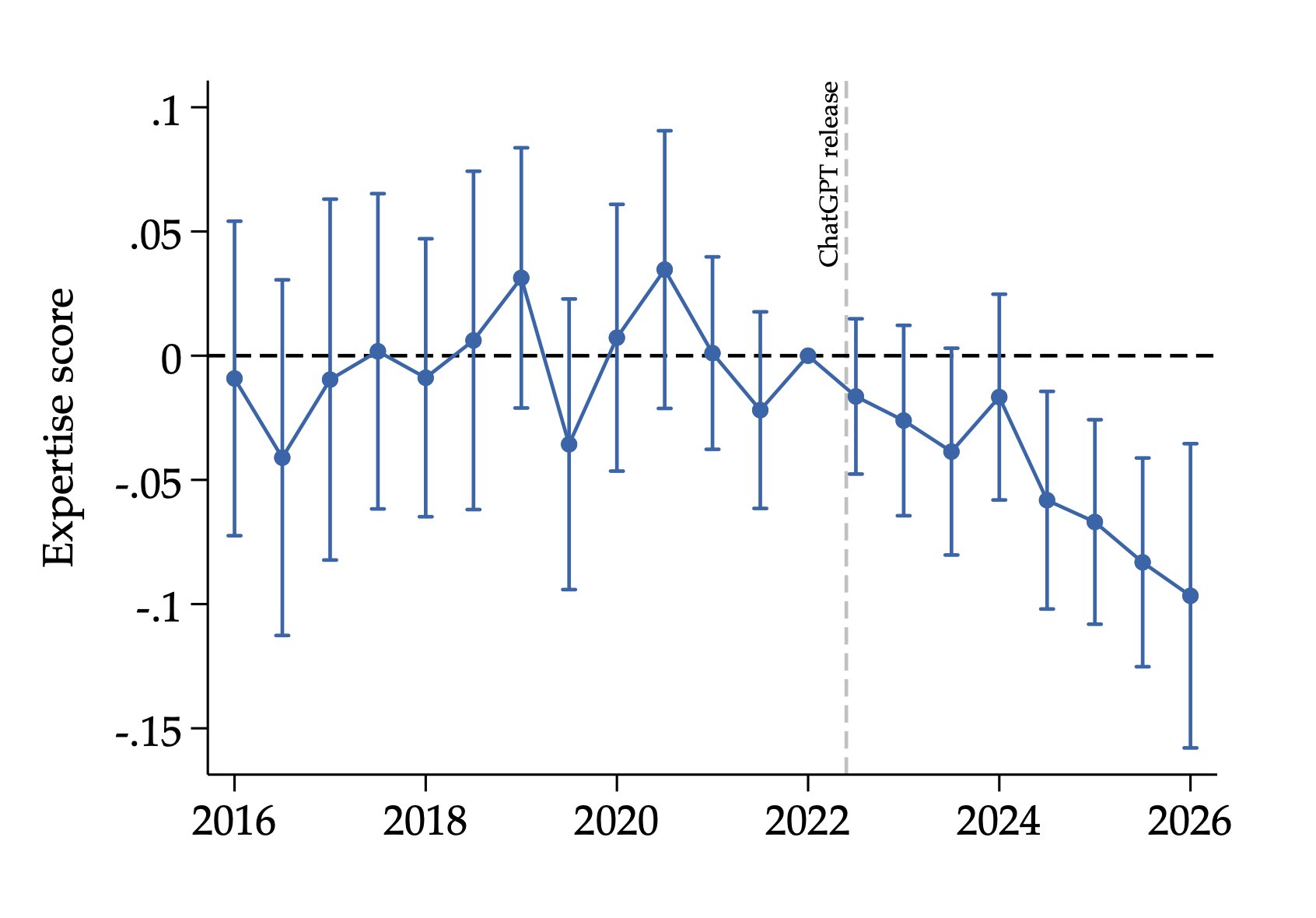

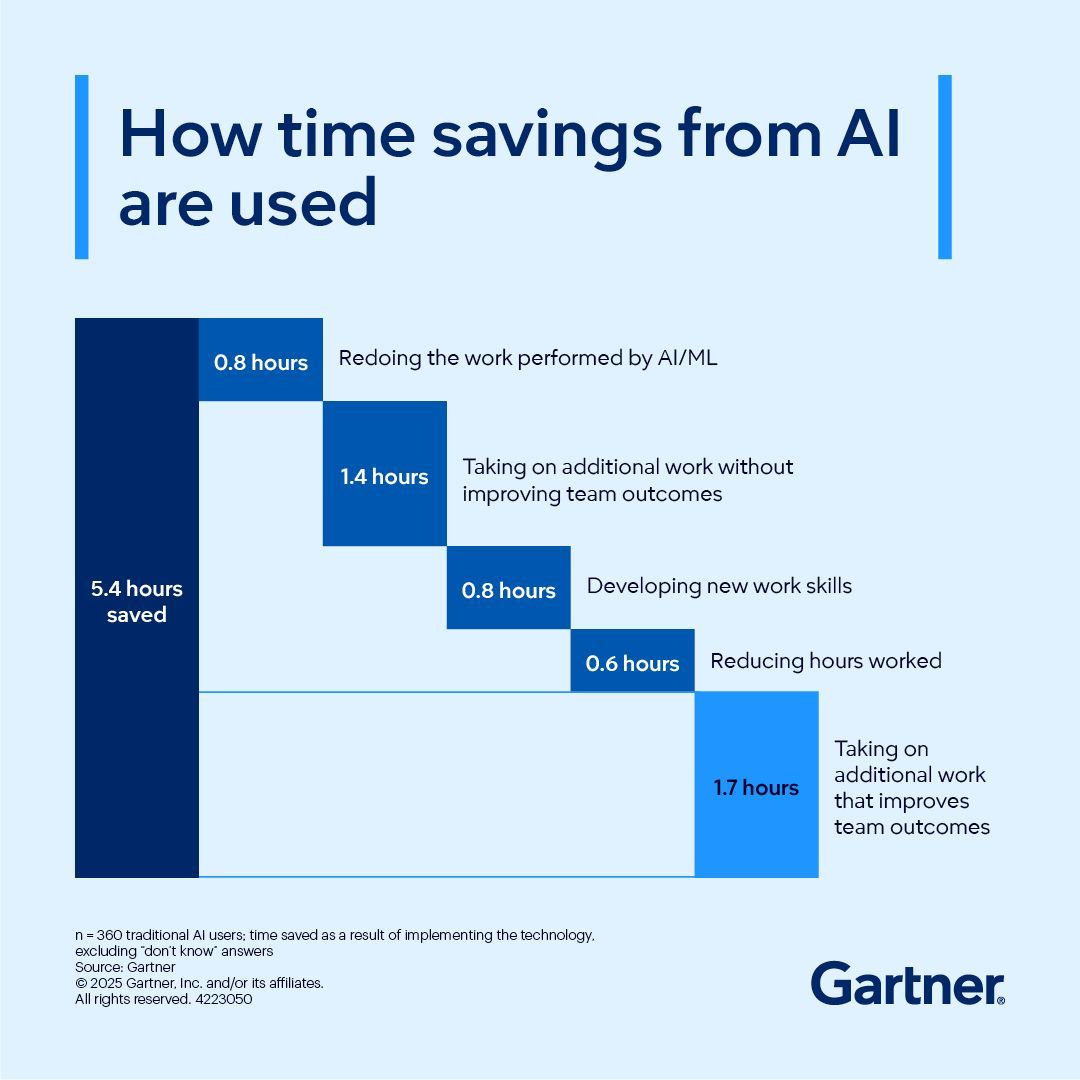

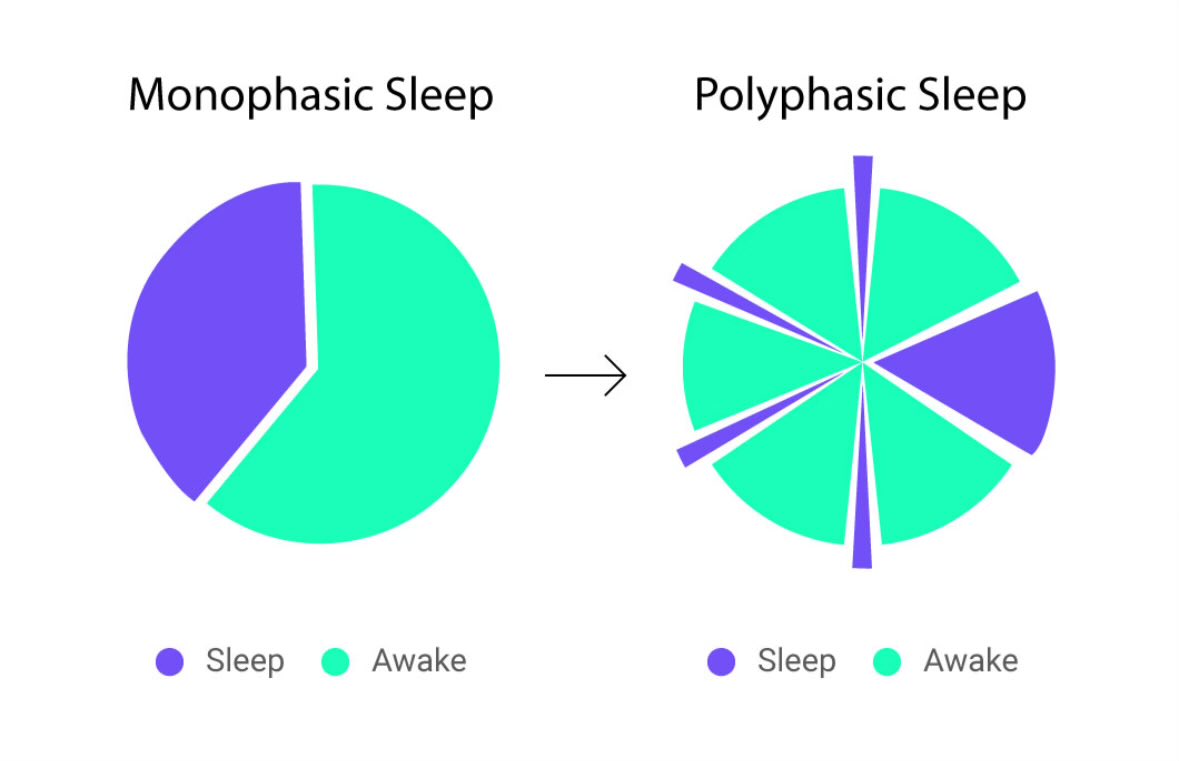

Checking used to be embedded in doing. An hour of work included verification, tightly woven in. Now it is a separate task. Perhaps five minutes producing, ten minutes checking.

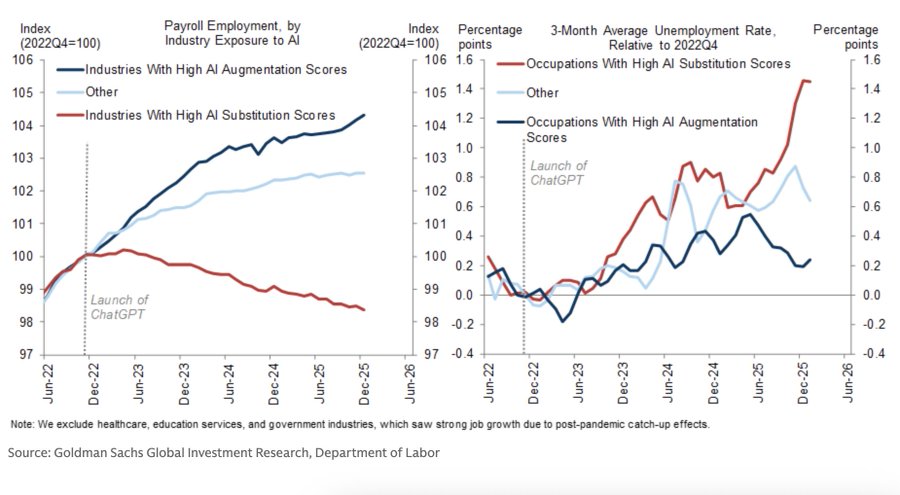

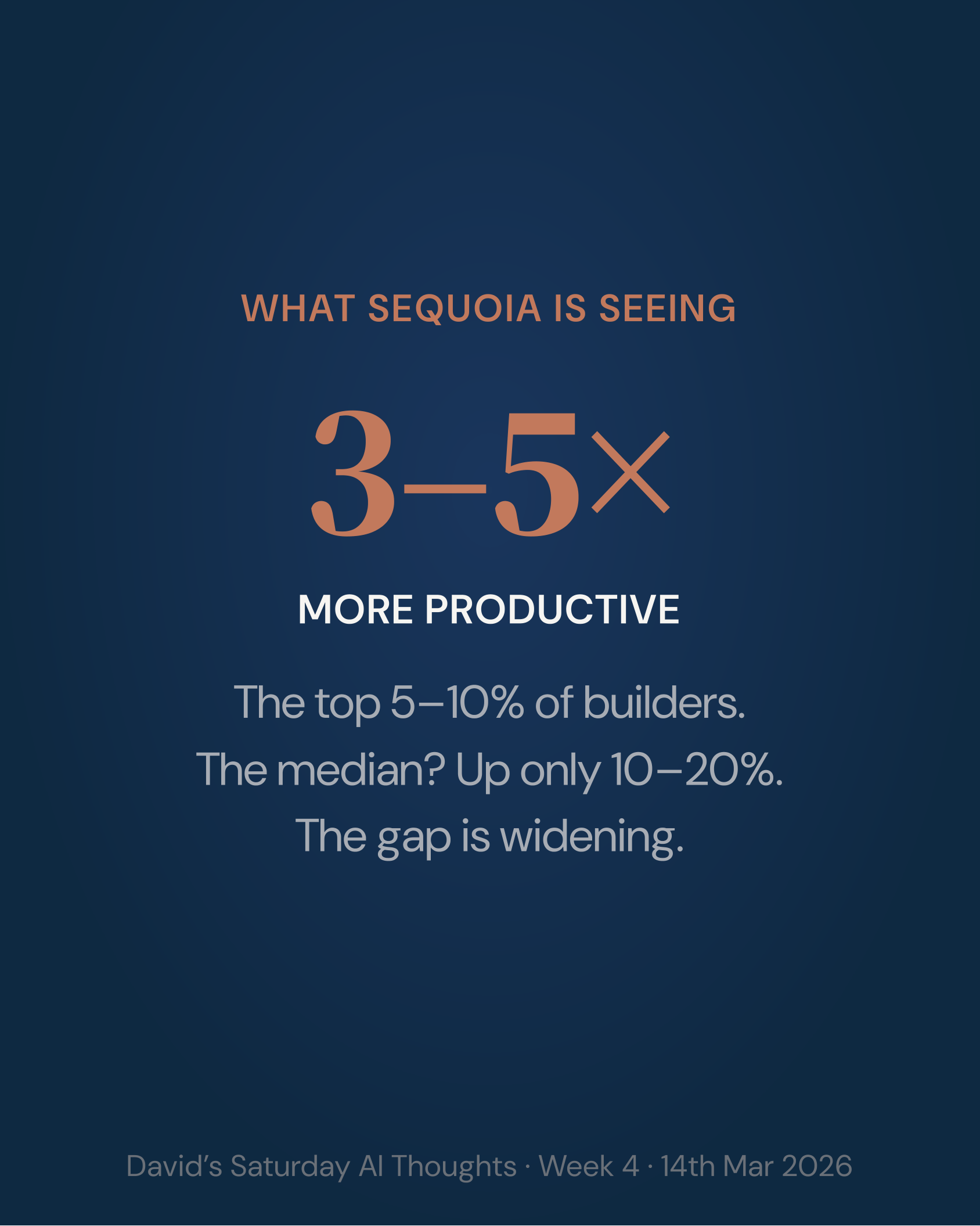

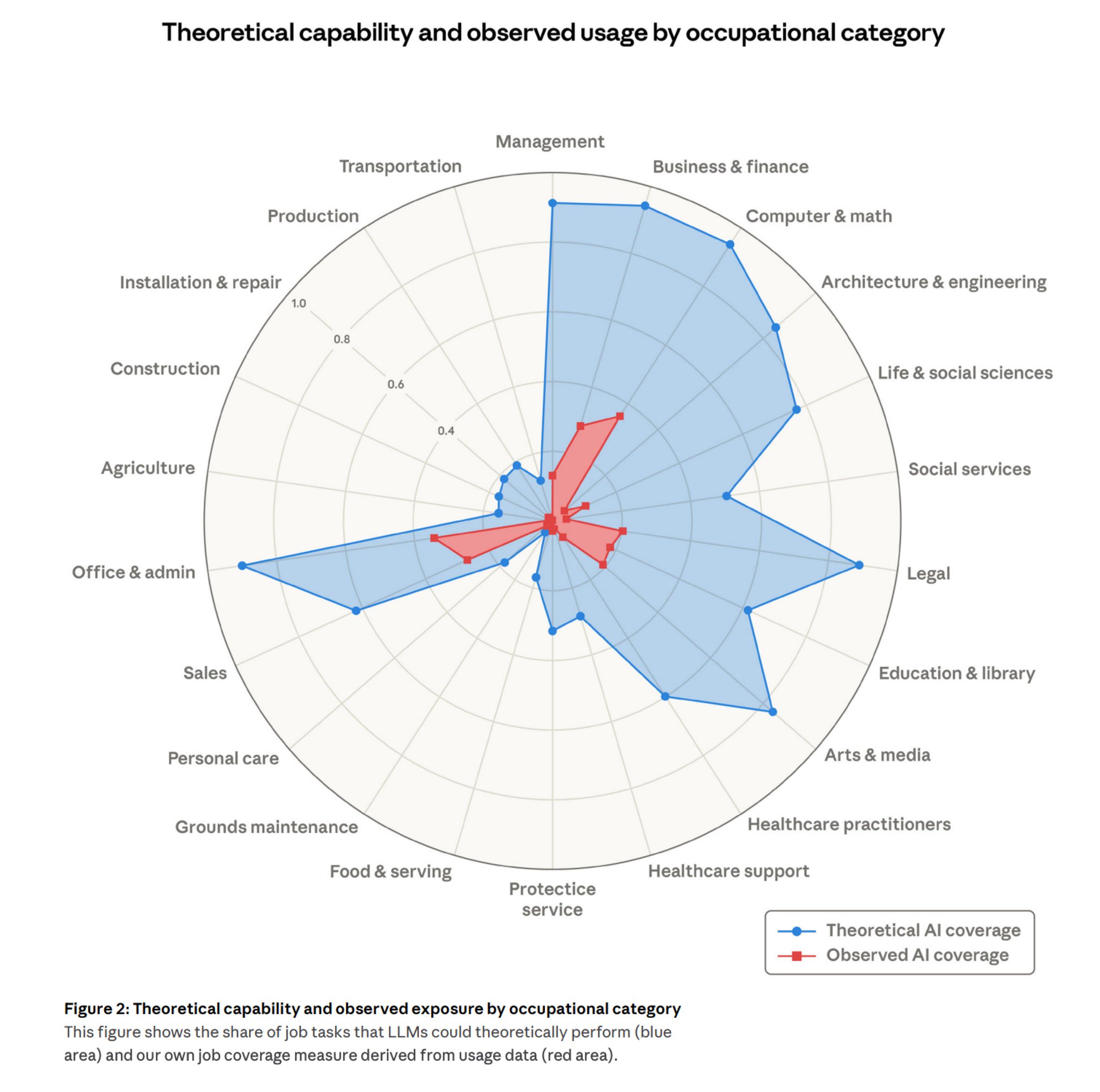

Ajey Gore, a former CTO, wrote this month that when execution becomes free, verification becomes the expensive thing. Martin Fowler picked it up. Gore's formulation for software: ten engineers become three engineers and seven people defining acceptance criteria, designing test harnesses, monitoring outcomes.

The same logic holds for knowledge work. An hour to build a research project end-to-end, scope through to deck. A day to audit it properly. Multiply across a team and the required roles shift massively.

The ratio can run much steeper. A new tool, Aleera, promises to draft a full due-diligence pack in under an hour. The same thing would have taken a team weeks. Checking and refining it carefully might take a week, call it forty working hours. So the audit-to-build ratio is roughly forty-to-one.

The work still compresses. What was weeks of team output becomes one hour of build and a week of careful audit. Even at 40:1, the speedup is real. The Auditor seat is what lets you take it. Without that seat you ship the slow version, because you cannot trust the fast one enough to send it.
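For anyone who wants to see the sums, here is a rough sketch of that arithmetic in Python. The one-hour build and one-week audit come from the example above. The 40-hour working week and the "team of four for three weeks" baseline are my own illustrative assumptions, not figures from any vendor.

```python
# Back-of-envelope sketch of the audit-to-build ratio and the overall speedup.
# ASSUMPTION: a working week is 40 hours.
HOURS_PER_WORKING_WEEK = 40


def audit_to_build_ratio(build_hours: float, audit_hours: float) -> float:
    """Hours of auditing needed per hour of building."""
    return audit_hours / build_hours


def effort_speedup(old_person_hours: float, build_hours: float, audit_hours: float) -> float:
    """How many times less effort the build-plus-audit route takes."""
    return old_person_hours / (build_hours + audit_hours)


build_hours = 1                             # from the essay: drafted in under an hour
audit_hours = 1 * HOURS_PER_WORKING_WEEK    # from the essay: about a week of careful audit

ratio = audit_to_build_ratio(build_hours, audit_hours)

# ASSUMPTION (hypothetical baseline): a team of 4 working for 3 weeks.
old_person_hours = 4 * 3 * HOURS_PER_WORKING_WEEK

speedup = effort_speedup(old_person_hours, build_hours, audit_hours)

print(f"Audit-to-build ratio: {ratio:.0f}:1")
print(f"Effort speedup versus the old way: about {speedup:.1f}x")
```

Change the baseline to match your own team. The point survives most reasonable inputs: the audit dominates the new cost, and the total is still far smaller than the old one.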

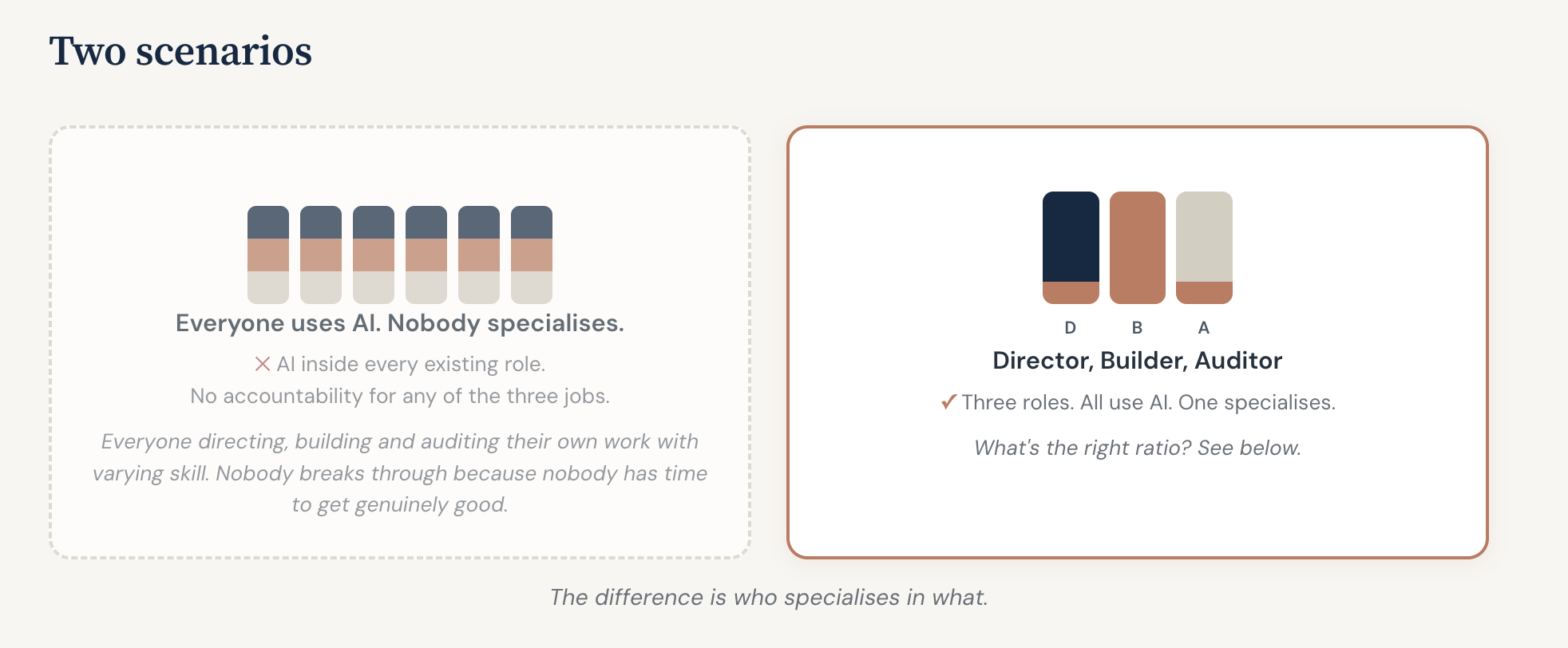

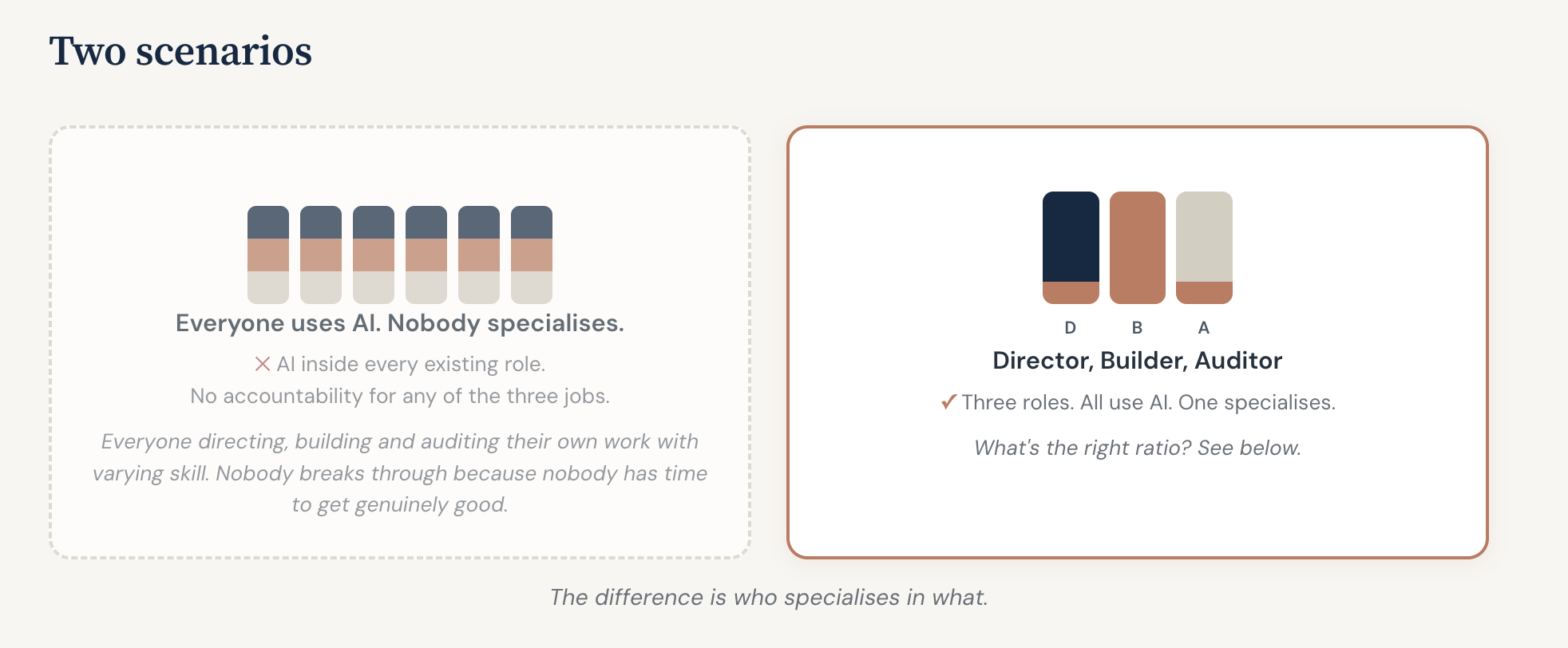

Three gaps open up. Each one points to a role.

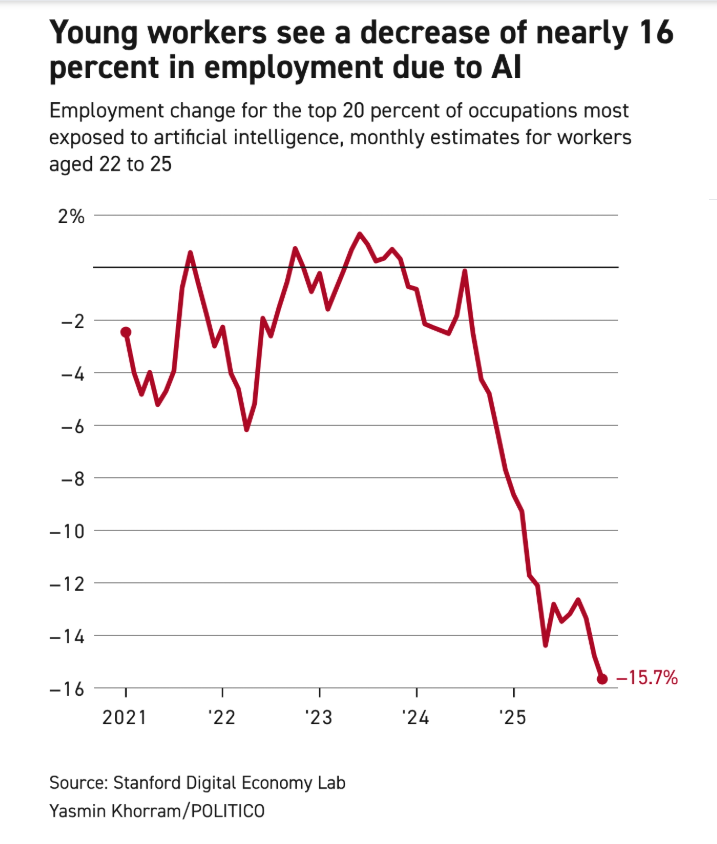

Directors are drowning. The people who frame the problem and sign off the answer are now reviewing more drafts more quickly than they can judge properly.

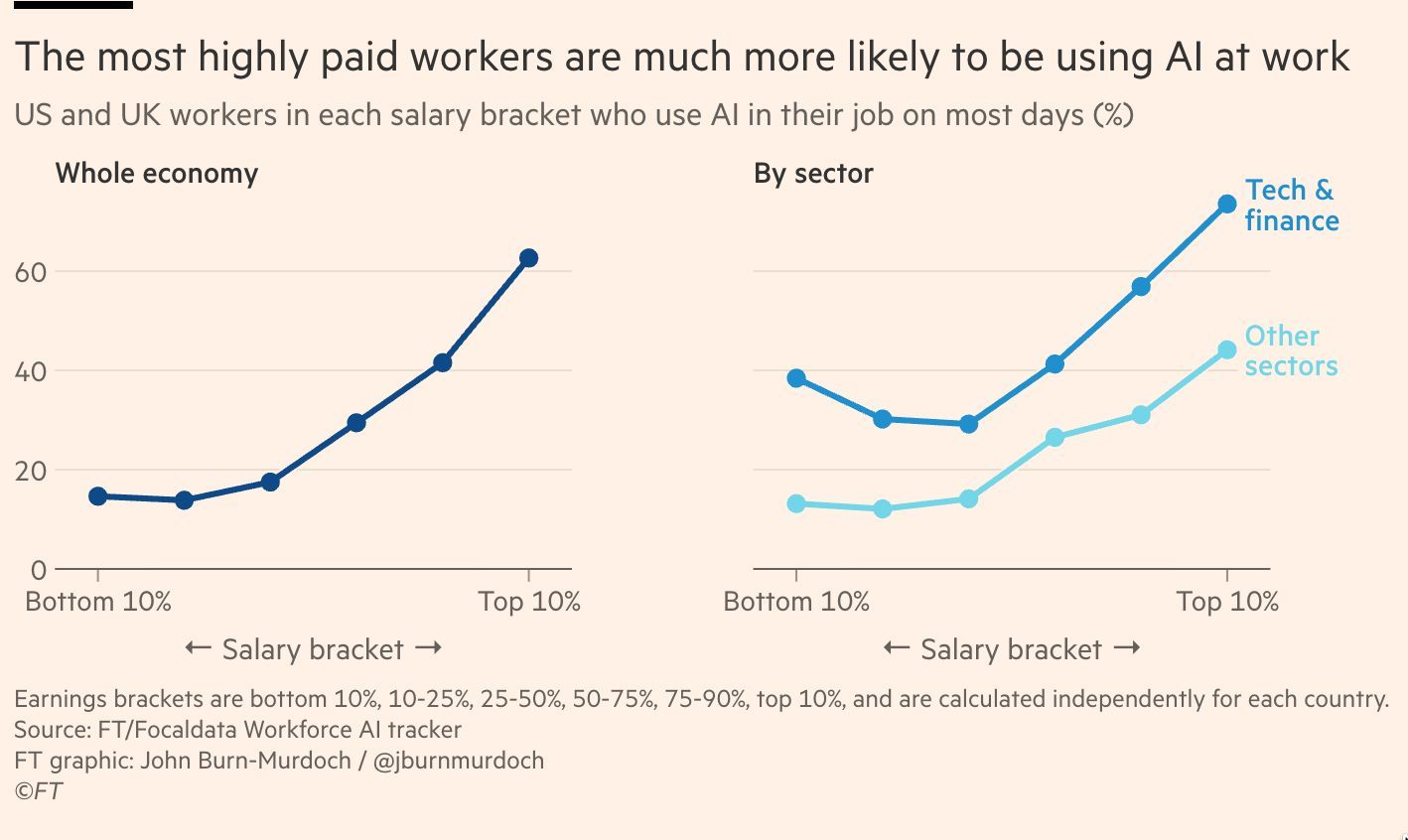

The frontier is a full-time job. The people doing the best AI work spend all day trying to keep up. Even the best will tell you they now can't. I can't. So most people cannot afford the time to be anywhere near the forefront, and it would be bad economics to ask them to. Senior and client-facing staff are worth more on judgement and relationships than on model selection. The frontier belongs to a specialist because specialisation is cheaper than spreading frontier fluency thinly.

The checking is broken. Senior staff burning expensive hours on verification. Builders pulled off the frontier to re-check their own work. Diligent-looking staff skimming mostly-right output and missing the rare error. Assurance has been treated as a side-task. It is a craft.

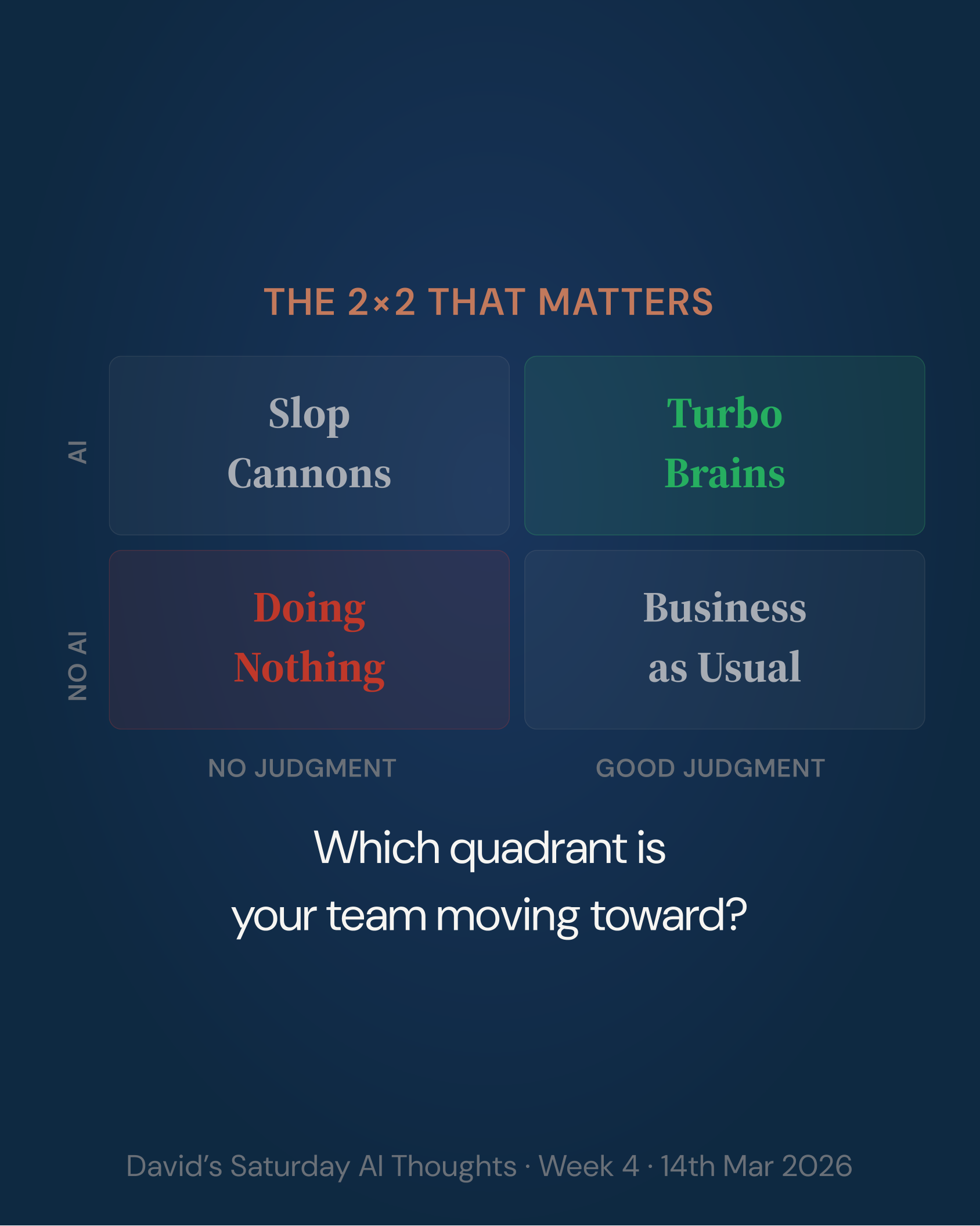

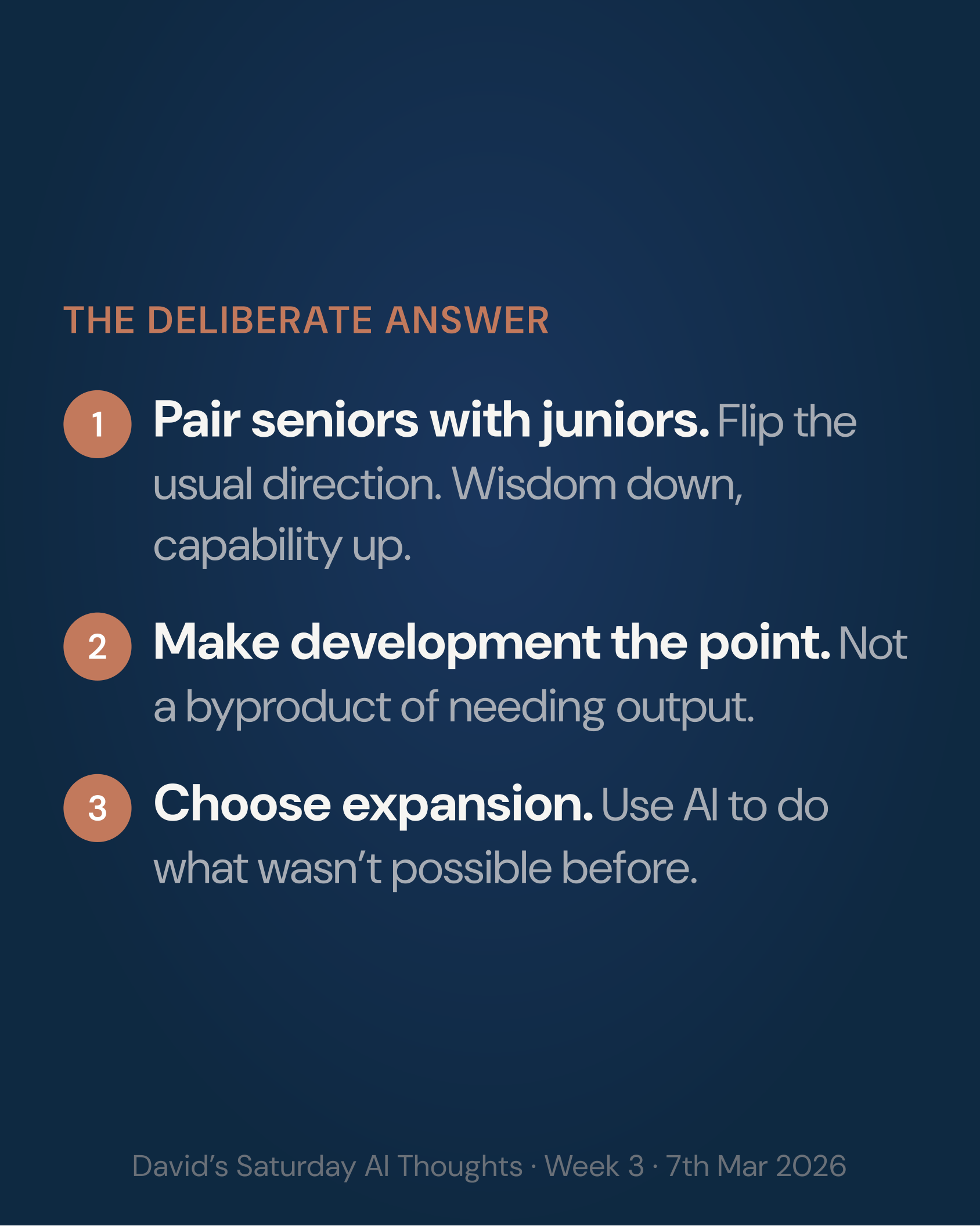

Three roles fall out.

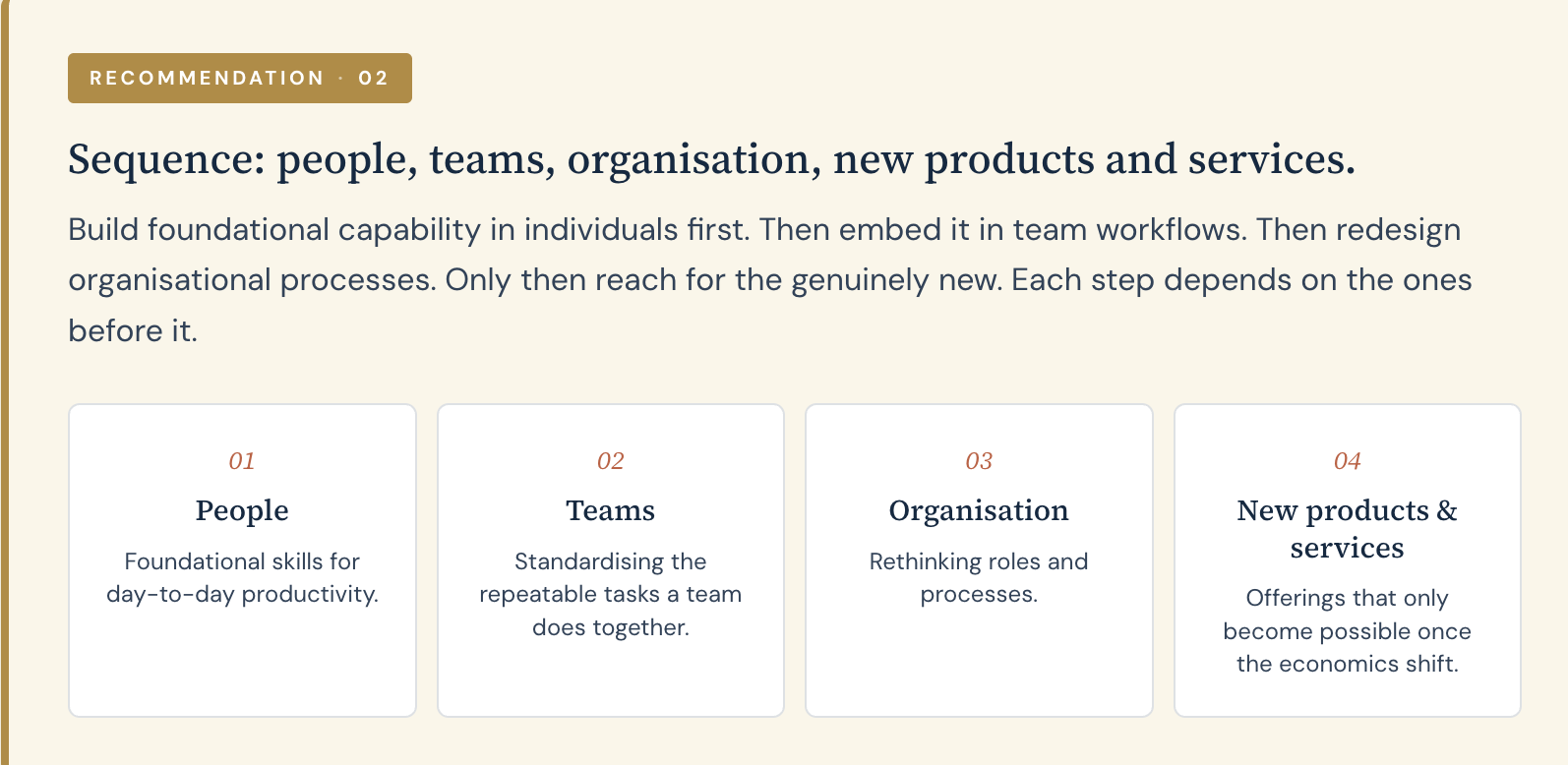

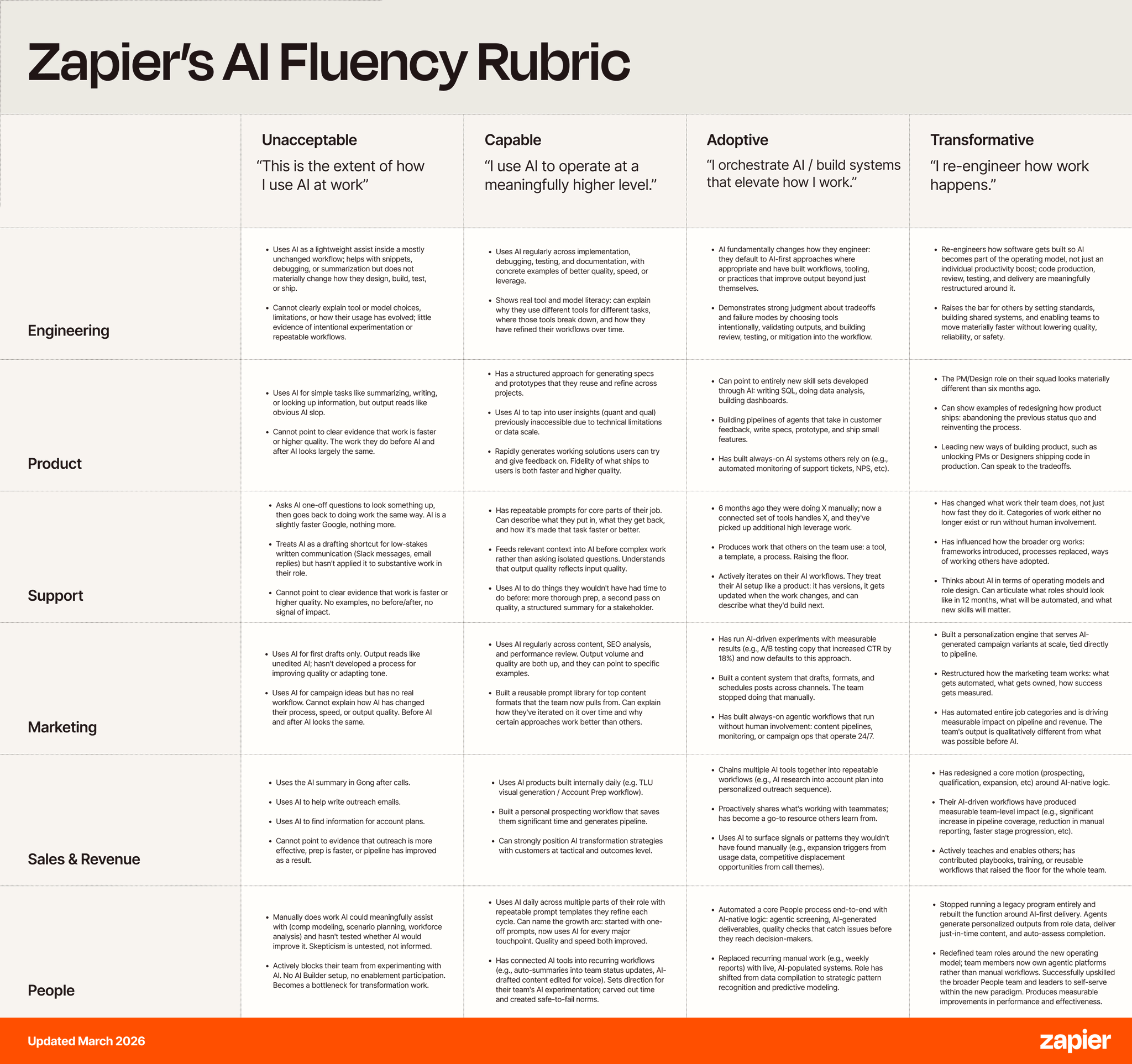

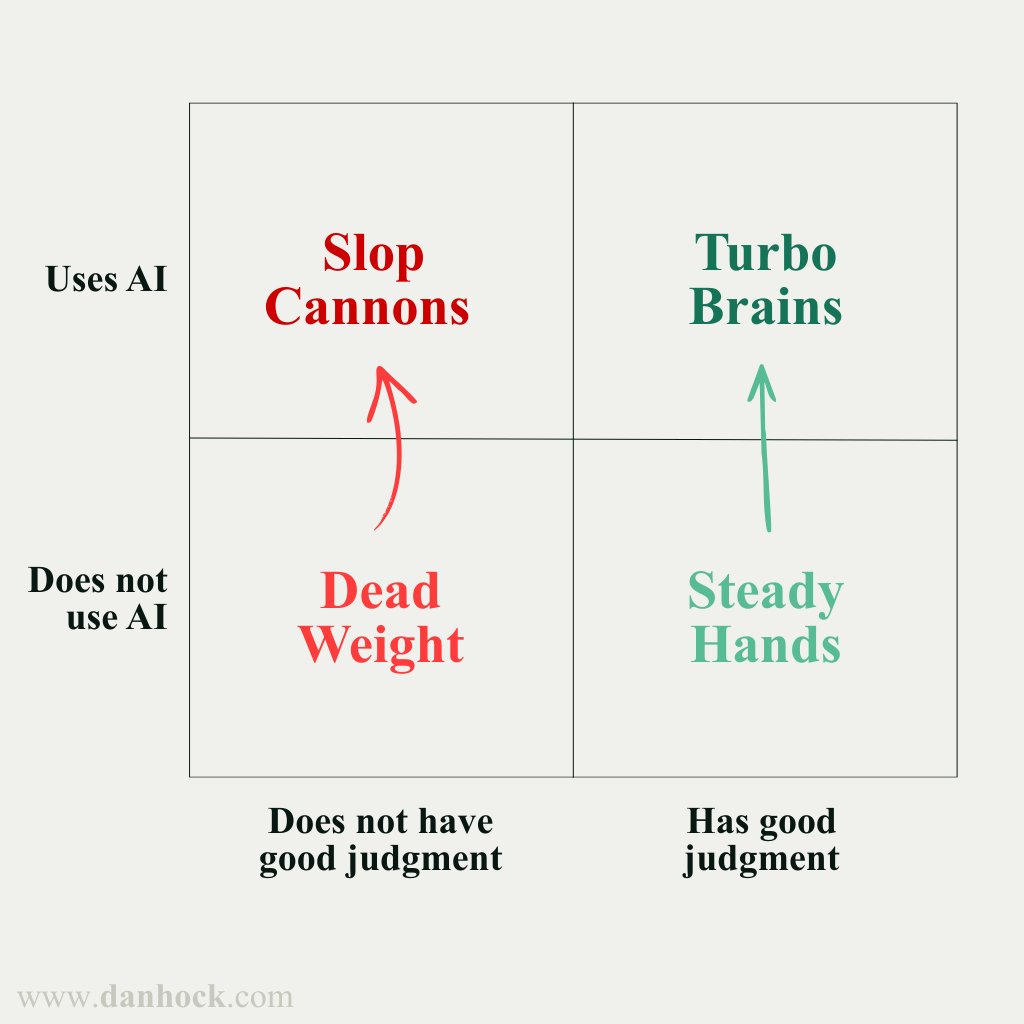

The Director frames the problem, decides what good looks like, owns the outcome. Uses AI every day and gets real value from it. Not an expert in the tools, and shouldn't try to be. The frontier moves too fast for someone whose calendar is full of relationships and decisions. Their job is taste and judgement, not keeping up.

The AI Builder is the specialist. Lives in AI all day, tries the new models and apps and features as they launch, runs many sessions in parallel, helps many people, ships at pace. The one seat where staying at the frontier is the job. Defined by appetite, not rank. Could equally be a mid-career specialist or a sharp new hire who has fallen in love with the tools.

The Auditor is a different breed. Traces citations to source. Runs key numbers independently. Stress-tests arguments cold in a second model. Replaces weak sources, swaps assumptions, reruns with corrected inputs, refines paragraphs that are nearly right. Auditing and fixing, not just checking. Uses AI every day and uses it well. The skill is judgement and accountability, not model selection. Usually an experienced generalist with a nose for nonsense. Not necessarily someone who has done the underlying work themselves, though.

Picture it. An Auditor reads an AI Builder's draft financial model. She traces ten citations to source. Nine check out. One is from a less-credible source, so she finds a stronger one and swaps it in. She reruns the affected calculation, updates the report's content. Signs off, or doesn't.

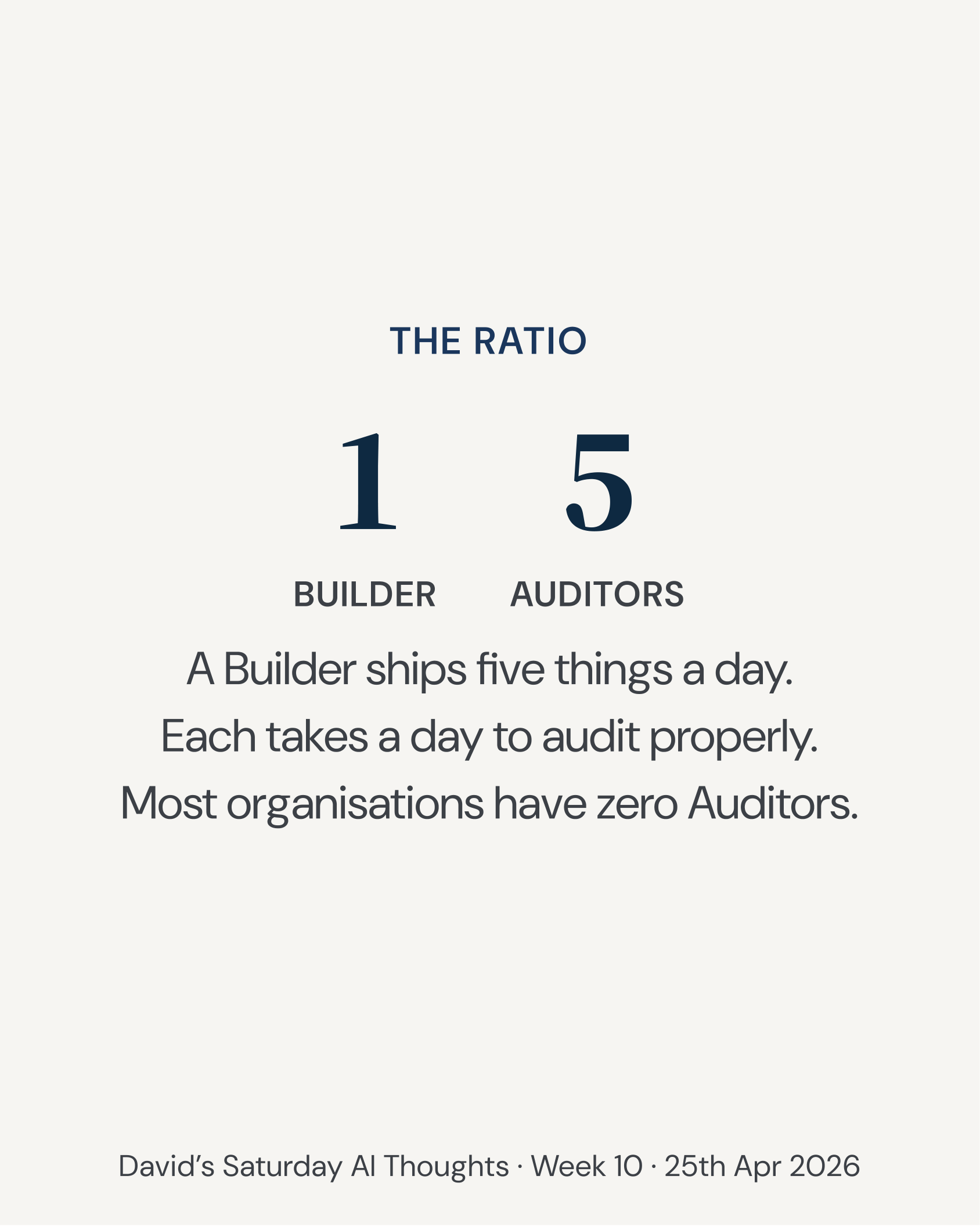

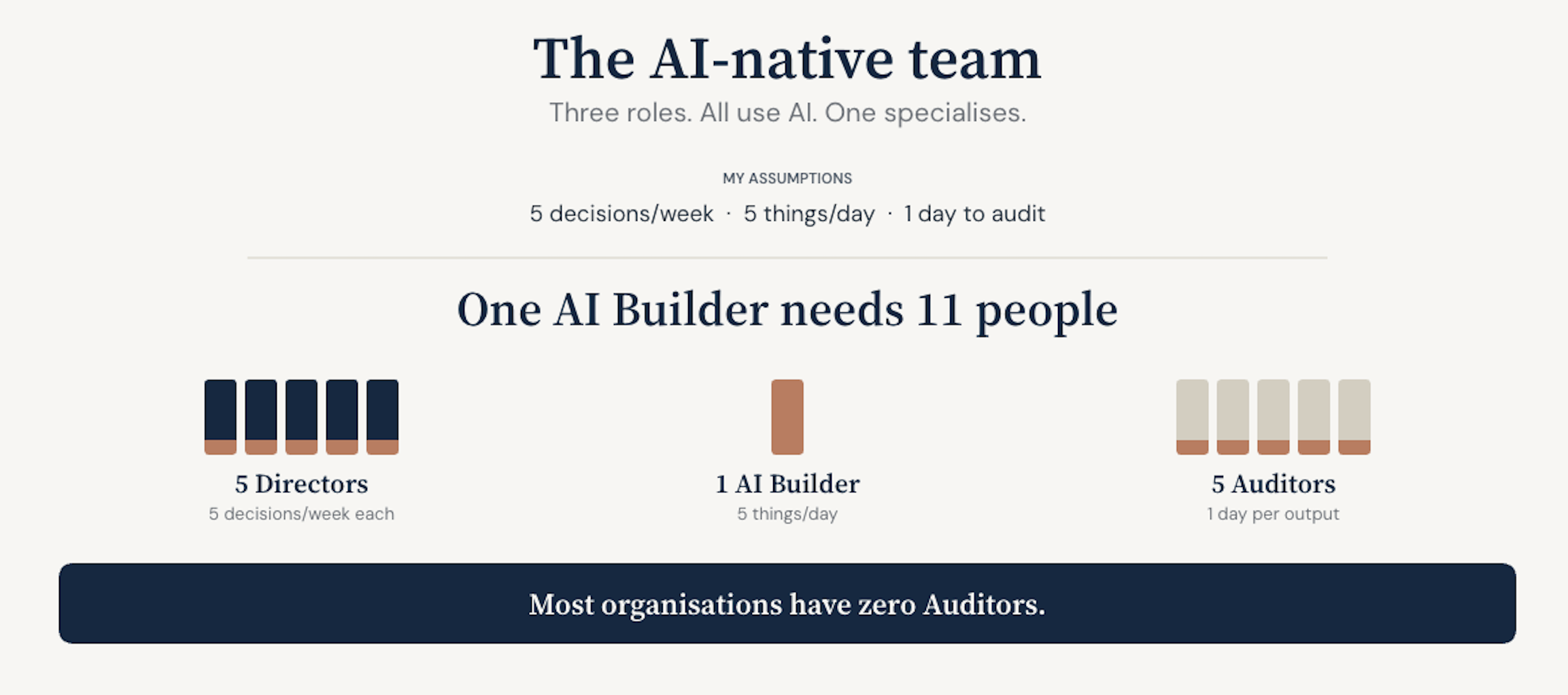

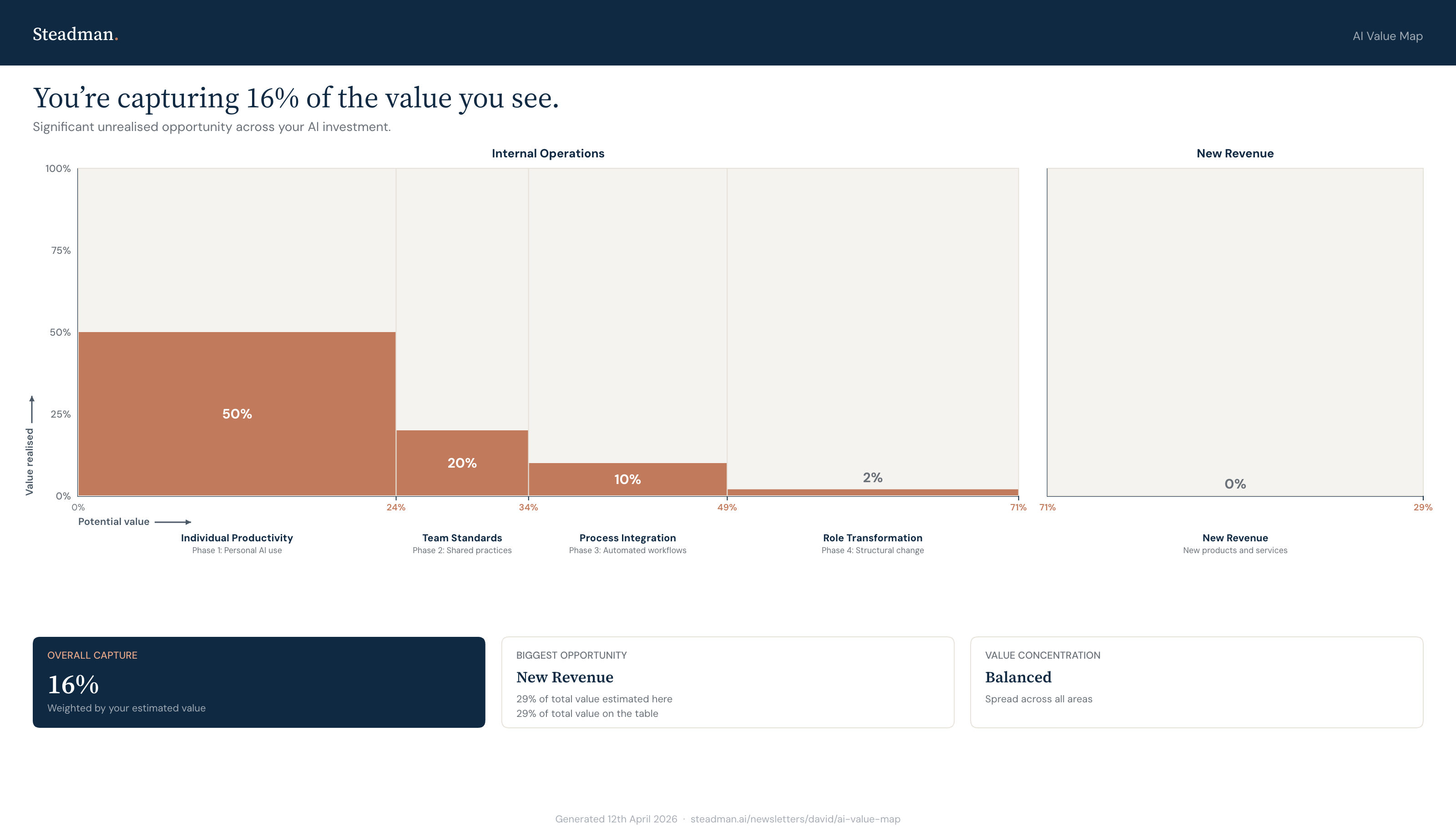

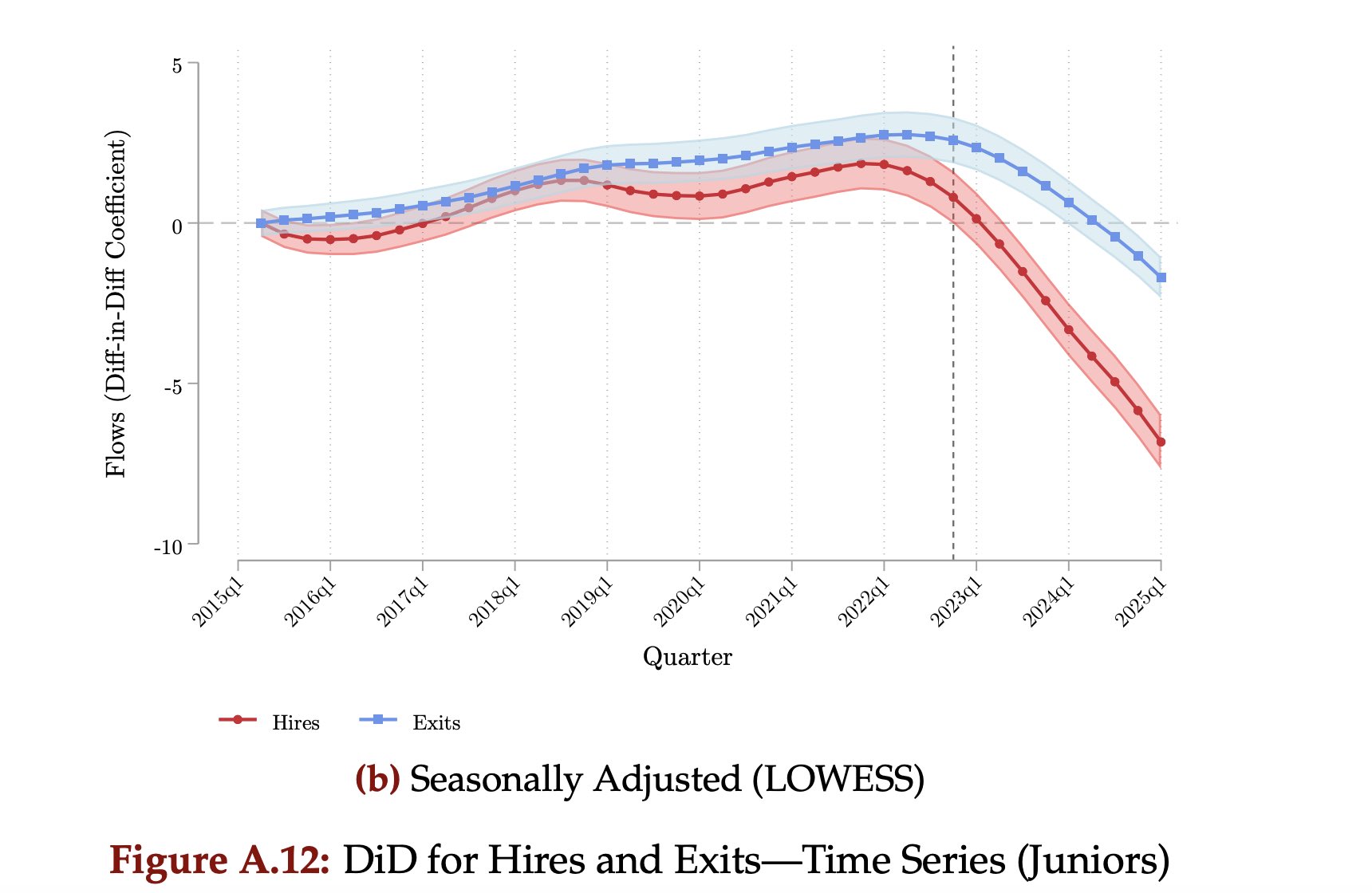

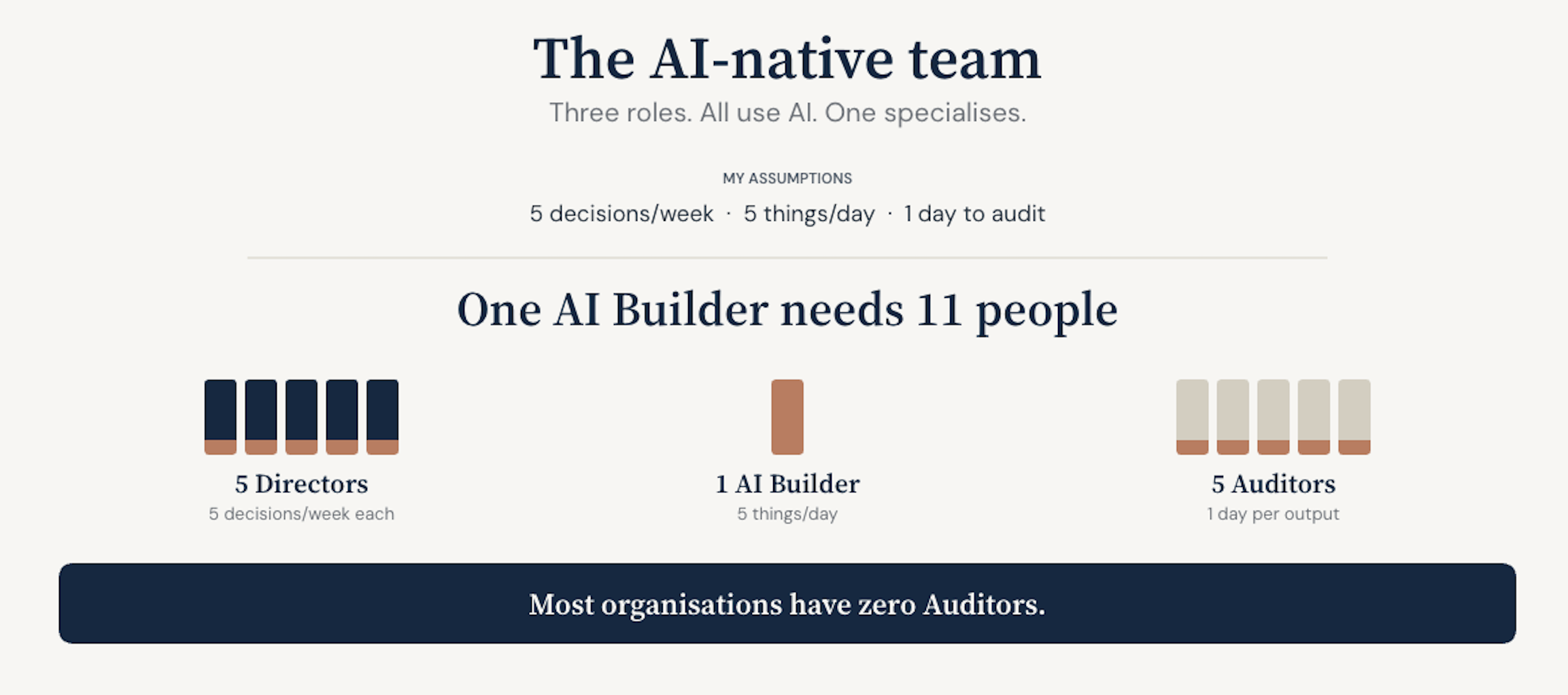

Start with the AI Builder, because the Builder is the scarce seat. Staying at the frontier is a full-time job, and an organisation can only afford so many full-time jobs spent there. Each Director needs roughly a fifth of a Builder's time to keep their decisions well-supplied with AI output. Each Builder ships five substantial things a day, and each takes a day to audit and refine properly. So each Builder needs five Auditors to keep their output shippable.

I built a quick interactive version at steadman.ai/auditors. Try your own assumptions.
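If you would rather play with the numbers offline, the staffing arithmetic above fits in a few lines of Python. This is a minimal sketch, not the code behind the interactive version. The class and function names are hypothetical, and the defaults are simply the assumptions stated above: a fifth of a Builder per Director, five items a day per Builder, one day of audit per item.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    """Defaults mirror the essay's figures; swap in your own."""
    builder_share_per_director: float = 0.2   # each Director needs a fifth of a Builder
    items_per_builder_per_day: float = 5.0    # substantial things a Builder ships daily
    audit_days_per_item: float = 1.0          # days to audit and refine one item


def auditors_per_builder(a: Assumptions) -> float:
    """Auditors needed to keep one Builder's daily output shippable."""
    return a.items_per_builder_per_day * a.audit_days_per_item


def staffing(directors: int, a: Assumptions = Assumptions()) -> dict:
    """Full-time-equivalent Builders and Auditors for a given number of Directors."""
    builders = directors * a.builder_share_per_director
    auditors = builders * auditors_per_builder(a)
    return {"directors": directors, "builders": builders, "auditors": auditors}


team = staffing(5)
print(team)

# A faster audit (half a day per item) halves the Auditor seats.
lean_team = staffing(5, Assumptions(audit_days_per_item=0.5))
print(lean_team)
```

With the default assumptions, five Directors share one Builder, who needs five Auditors. The ratio is only as good as the inputs, so treat it as a starting point for your own numbers.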

I have been living the shortfall. A research project I built has been in my queue for eleven days waiting for me to review it. A financial model I cannot send until I check every number. A client deck that's been almost ready for a week. Every piece built cleanly. All mostly right. Much of it ready to ship. None of it has shipped, because the audit queue is longer than the build queue. Weekends have become my quiet, focussed audit time. Every senior AI user I know has hit the same wall.

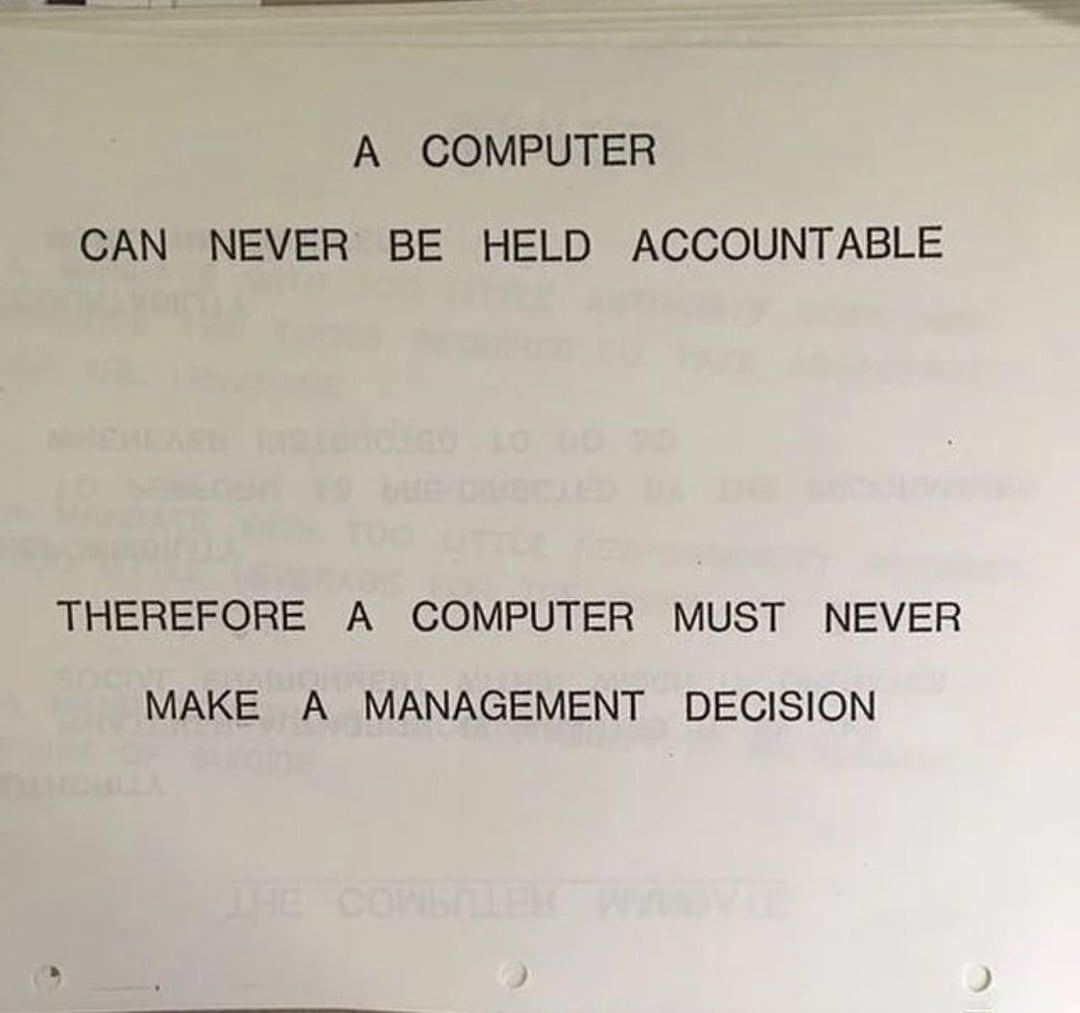

Why the Auditor has to be human is a separate question from why the seat exists.

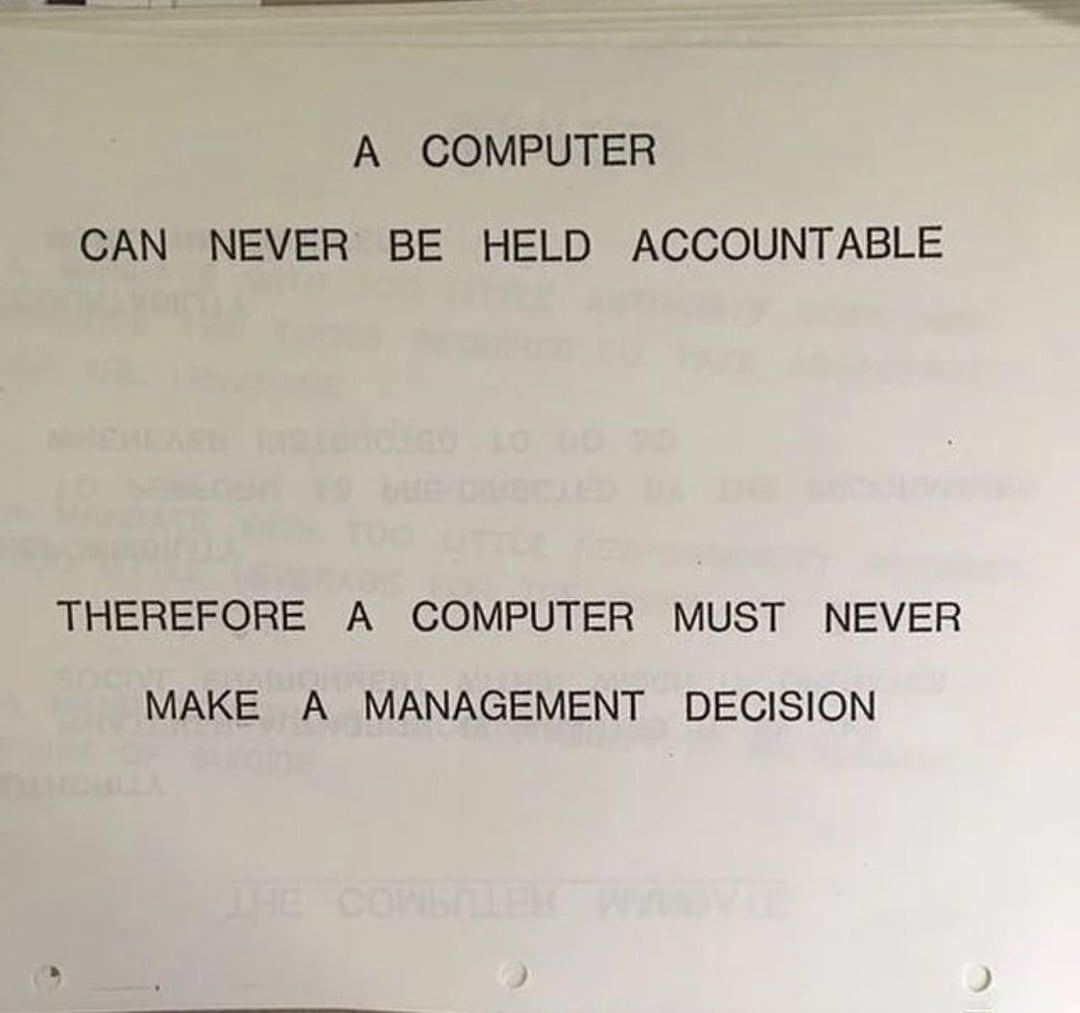

IBM trained its people on one line in 1979: "A computer can never be held accountable, therefore a computer must never make a management decision." The line is more true now, not less. A machine can fail. Only a person can be accountable for the failure. The Auditor can run three models to check a fourth. The signature on the file has to belong to someone with a stake.

The word auditor carries twenty years of the wrong connotations. The old auditor checked your work, often after you thought it was done. A brake. A second-guess. Compliance, proofing, QA. A joke many liked to make.

The new auditor checks and improves the machine's work, on your behalf. Not the person standing between you and shipping. The person who lets you ship at all. The shift is whose work is being checked, and why. Same care. Different client. One holds you back. The other lets you move.

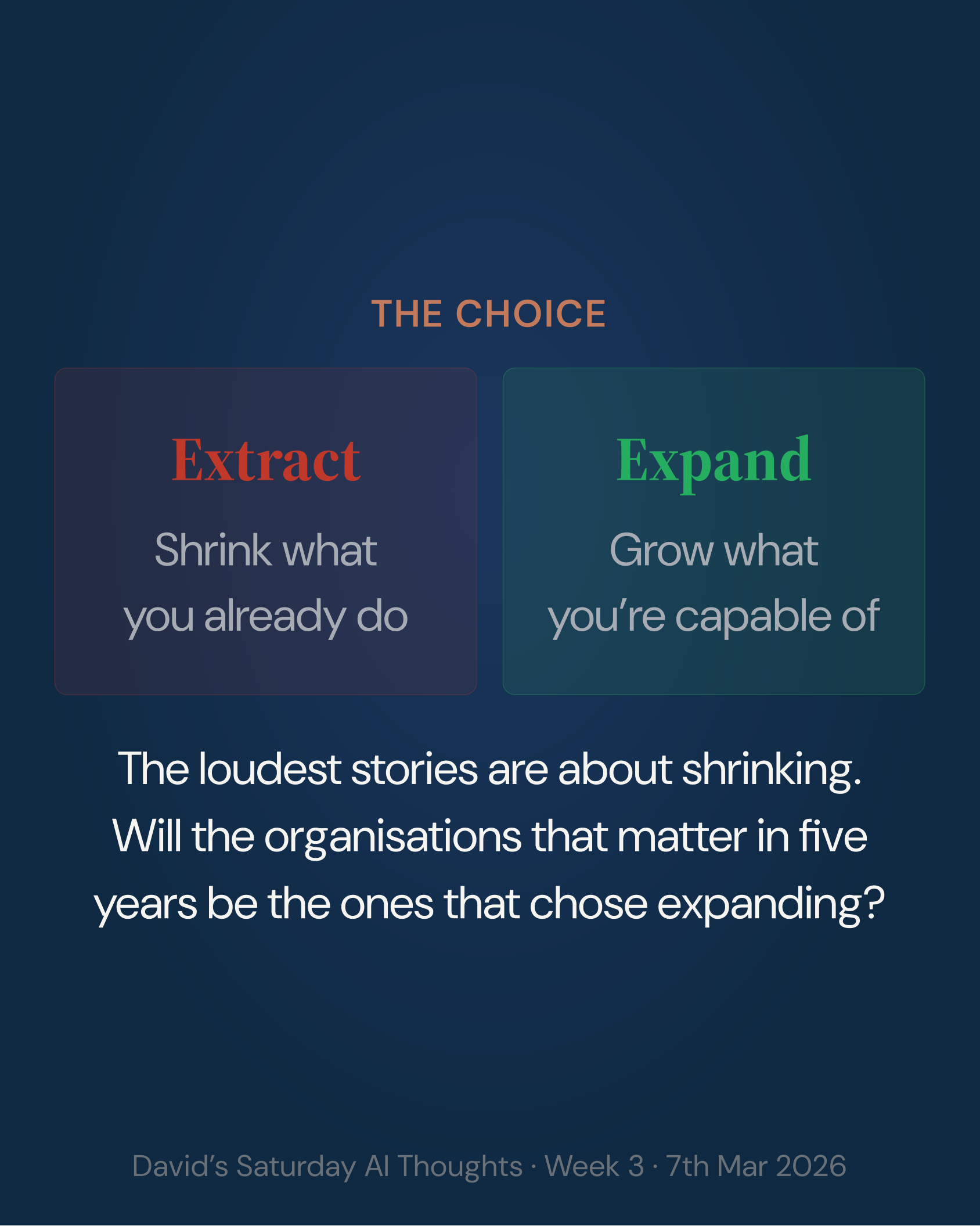

Software engineering has started reorganising around three roles with the weight on auditing. Knowledge work has not done the thinking here yet. The organisations that do it first will have the only AI-native teams that ship fast and ship right. The rest will ship and regret it.

Three things worth knowing

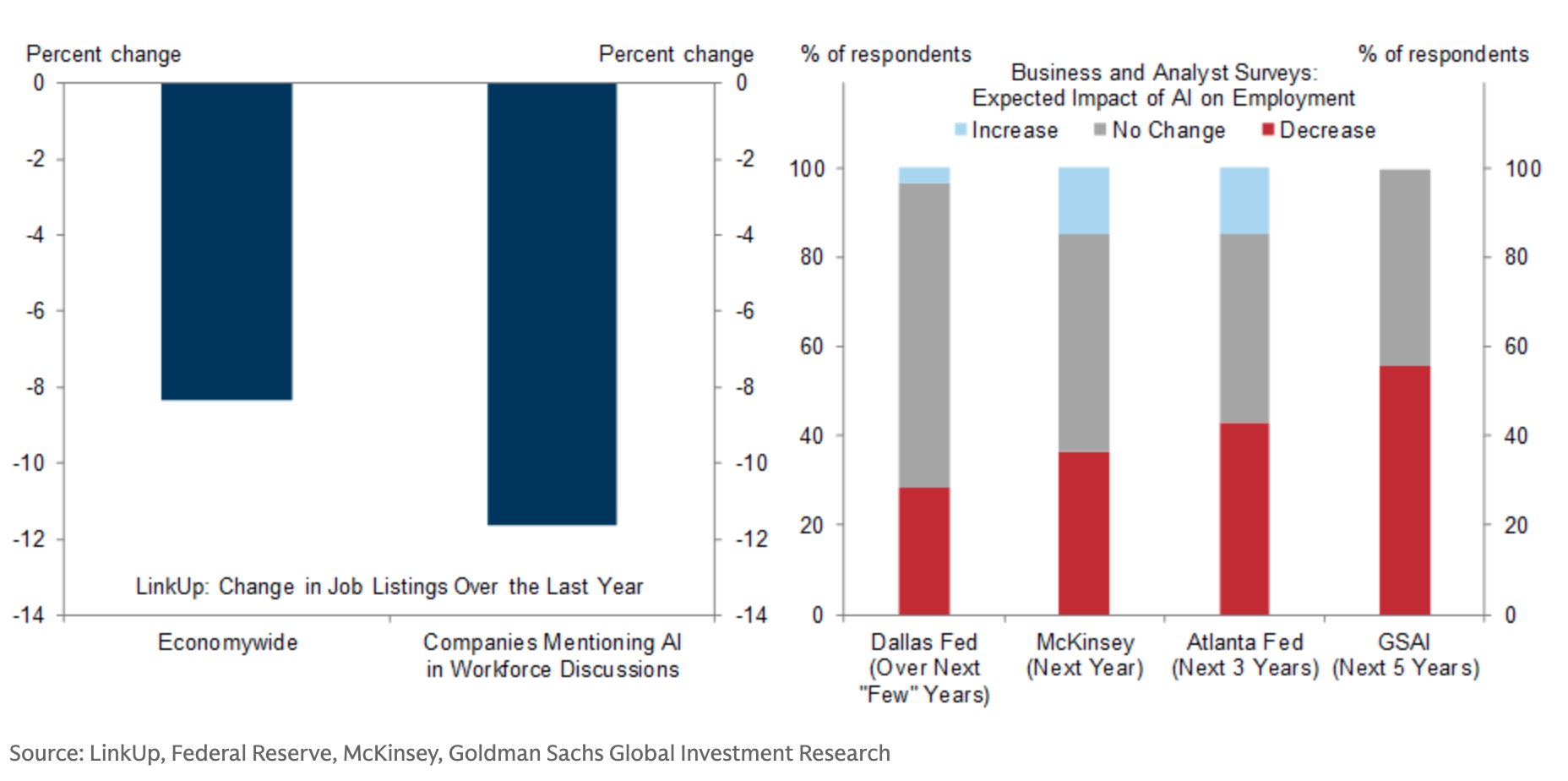

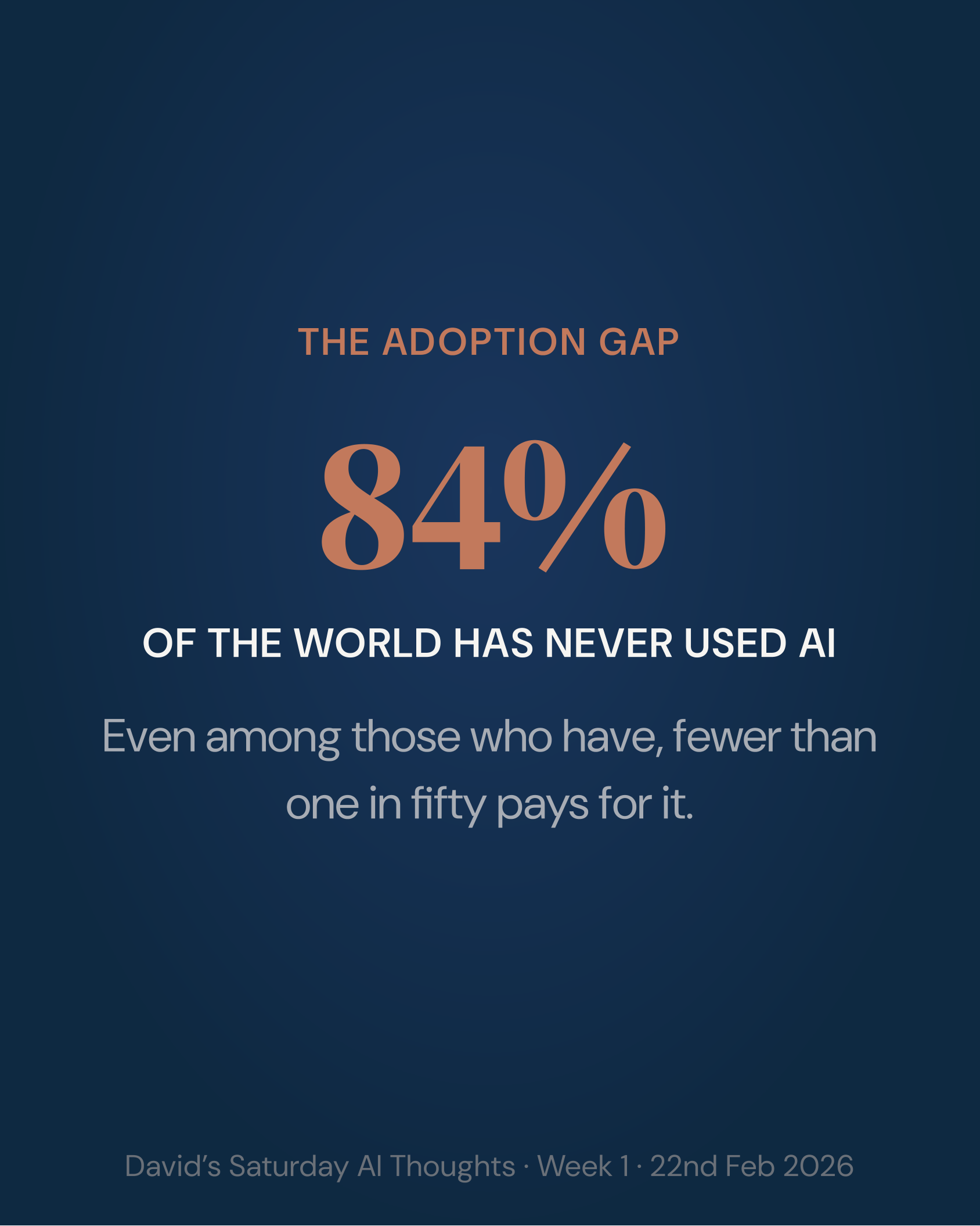

1. Fewer than 10% of organisations have scaled AI agents beyond pilots.

McKinsey data names the bottleneck: organisations won't hand over control. Agents require delegated decision rights that most companies withhold, pre-agreed accountability frameworks that don't exist, and cross-functional governance that nobody has built. Pilots succeed in contained environments and stall the moment agents intersect real workflows where incentives and reporting lines conflict. Matches my experience of most organisations. It takes hard, careful work to resolve these issues. Those that have done the work are getting the benefits.

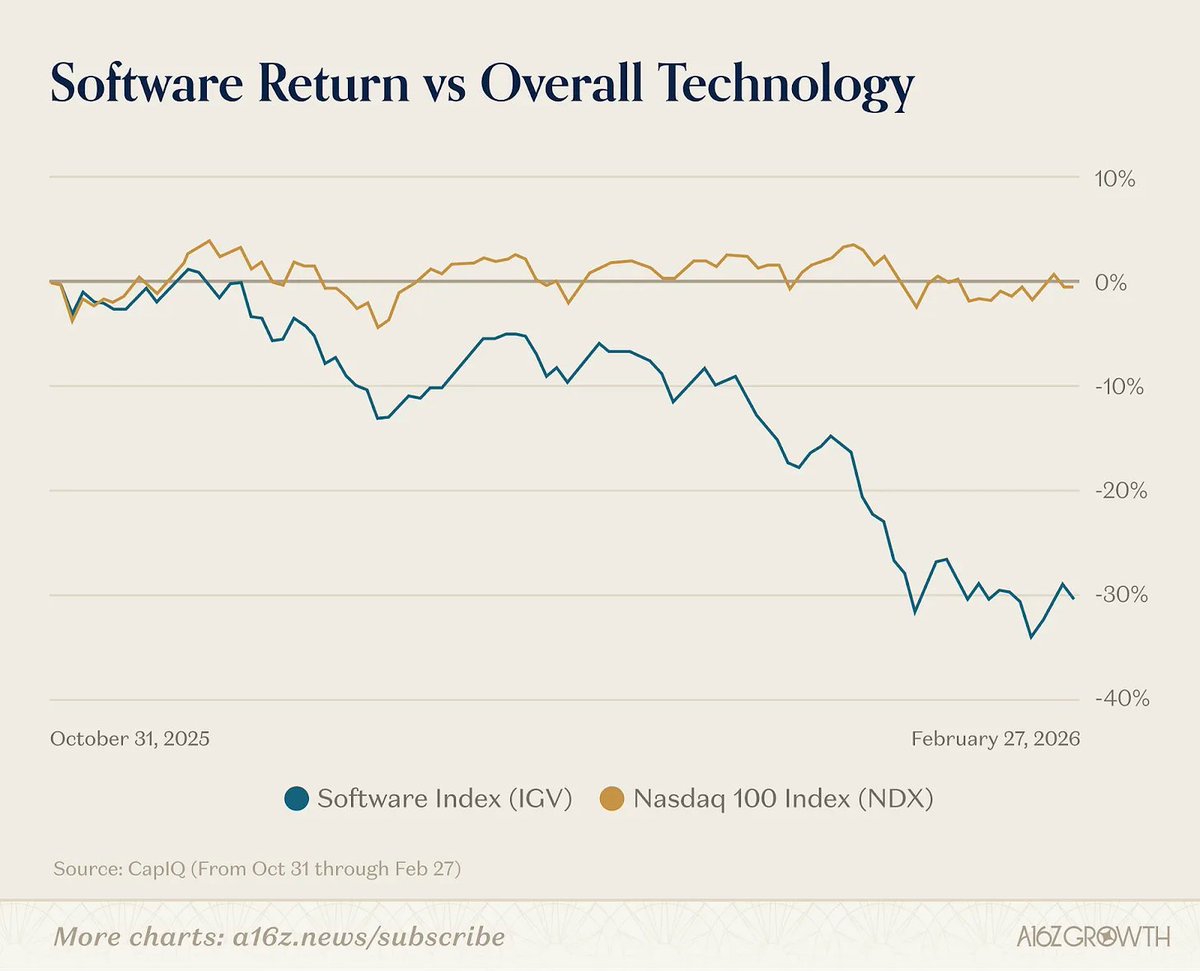

2. GitHub paused new Copilot signups. The flat-rate model broke.

GitHub paused new signups for Copilot's agentic plan after coding agents blew through the flat-rate compute allocation. Uber's CTO told journalists that AI coding tools have already consumed the company's entire 2026 AI budget. Goldman Sachs reports AI inference costs in engineering now approaching 10% of headcount cost, on a trajectory towards parity with salaries within several quarters. The pattern: evangelism, budget shock, rationalisation. The smart response isn't to slow down. It's to make sure the work being done is valuable and to match the right model to the right task. Is flat-rate AI pricing over?

3. 29% of employees admit to sabotaging AI initiatives.

Writer's annual enterprise survey shows every organisational health metric worsened in 2026. Sabotage means what it sounds like: reverting to pre-AI workflows, deliberately not using assigned tools, discouraging colleagues from adopting, withholding inputs that would make AI systems work. "AI is tearing my company apart" rose from 42% to 54% of C-suite respondents. Employee confidence in their company's AI strategy dropped from 47% to 31%. Most C-suites concede their strategy is "more for show." The clearest counter-narrative yet to the adoption-is-accelerating consensus.

Eleven bits that didn't fit online →

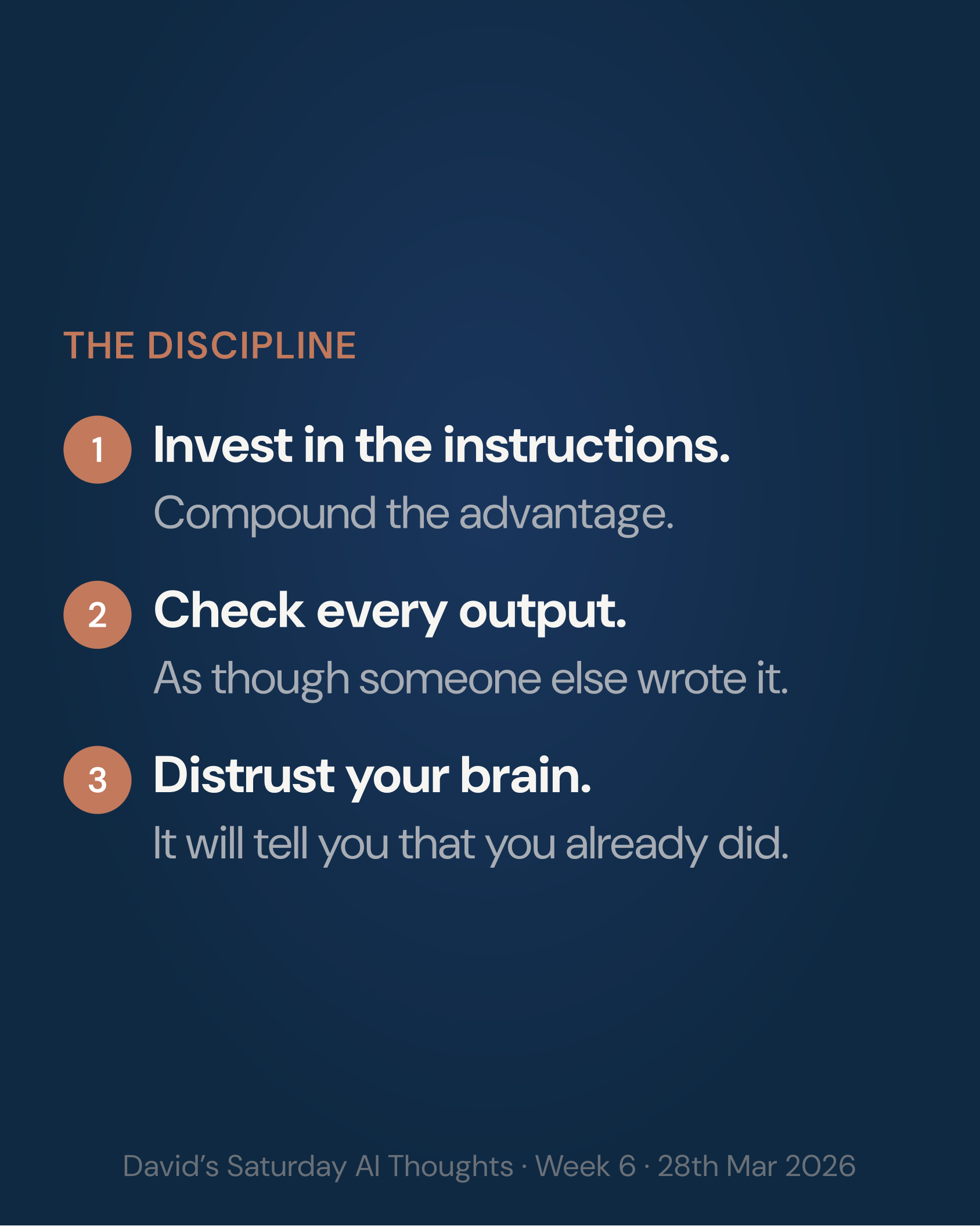

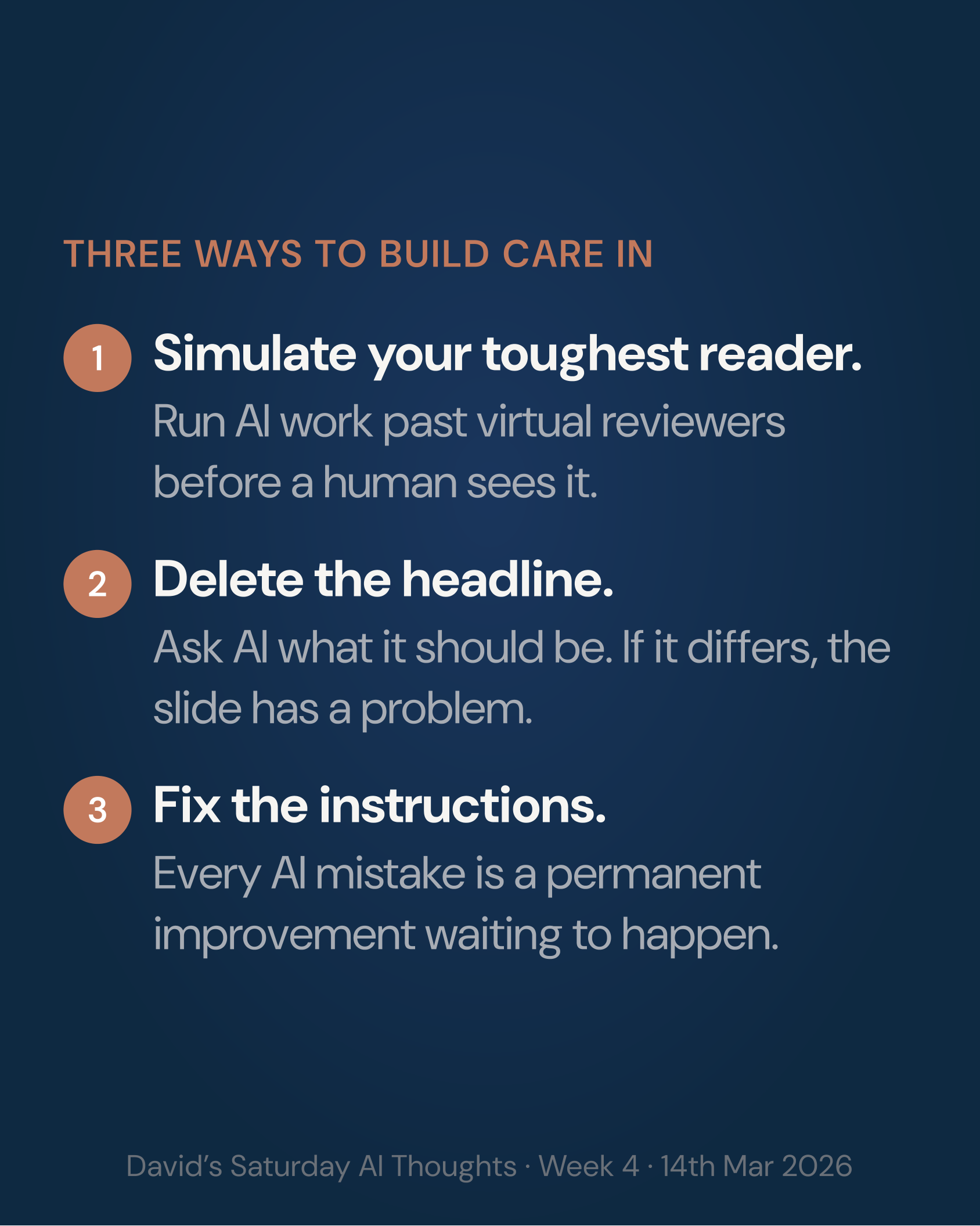

Try this

Ask "is this the simplest version?" before accepting AI output

Language models have no incentive to simplify. Work is free to them. Bryan Cantrill calls it the loss of "laziness": the human impulse to find the crisp abstraction rather than add another layer. When AI drafts something, check whether it found the simplest solution or the first solution. A three-step process wrapped in seven steps of hedging is worse than the three steps alone. The scarce resource now isn't production. It's restraint.

Audit cold

When checking AI output, open a new chat, ideally with a different model. Upload the draft and the source materials and nothing else. Don't verify inside the same conversation that generated the work. That conversation will defend its own output. I've tested this repeatedly over the last month: even the same model that produced a confident answer will find the errors when it reads the sources fresh, without its own prior reasoning in the context window. Sullivan & Cromwell filed hallucinated citations in a multibillion-dollar bankruptcy case. A cold audit would likely have caught them.

Save one reusable AI workflow this week

Google shipped Skills in Chrome this week: saved one-click AI prompt workflows that run on whatever page you're viewing. Pick a task you do weekly. Summarising a website, extracting action items from meeting notes, comparing options across open tabs. Save it as a named Skill. Whether you use Chrome, Claude, or something else, the principle is the same: if you've done it three times, encode it. Each saved workflow compounds. Each unsaved one gets reinvented from scratch.

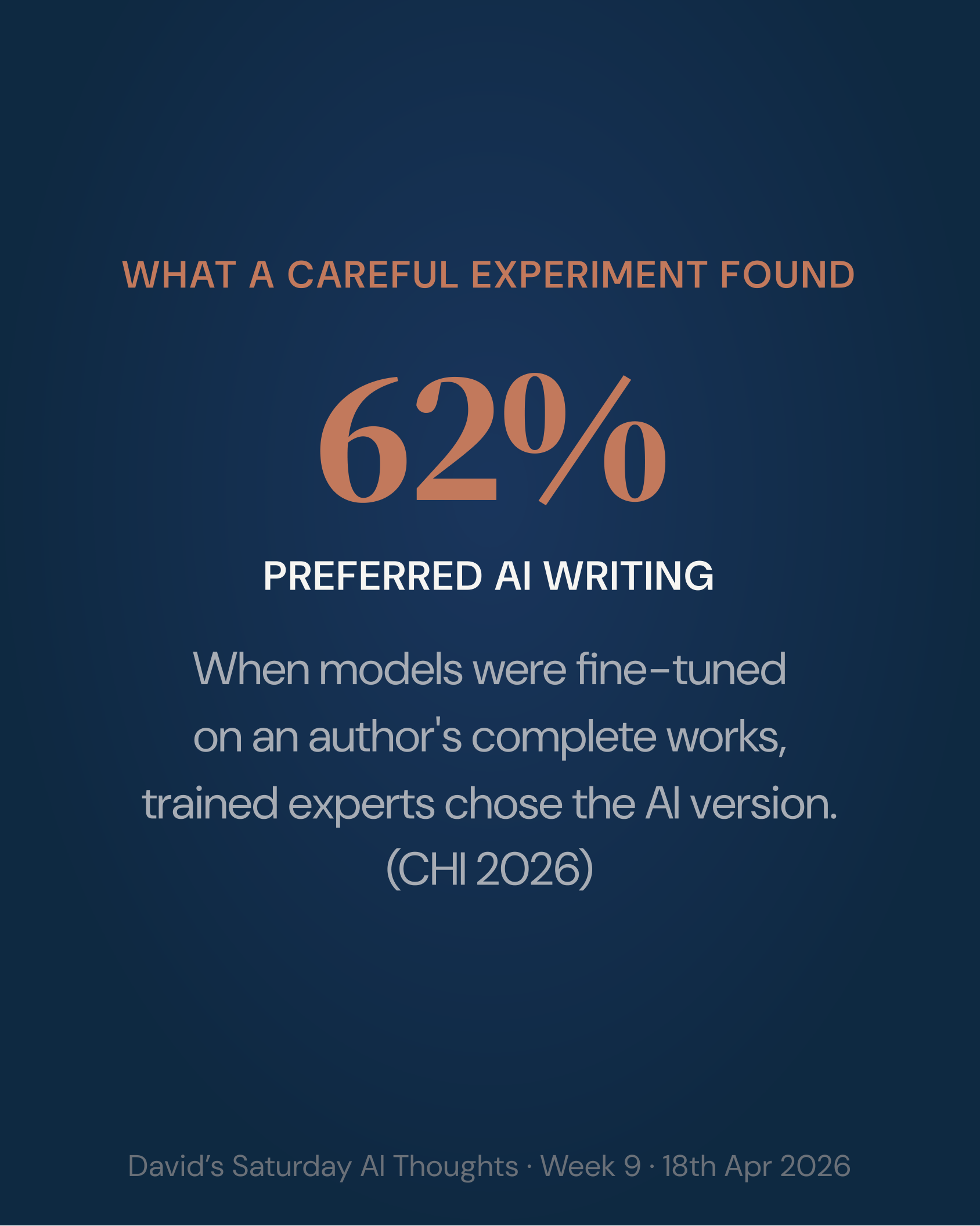

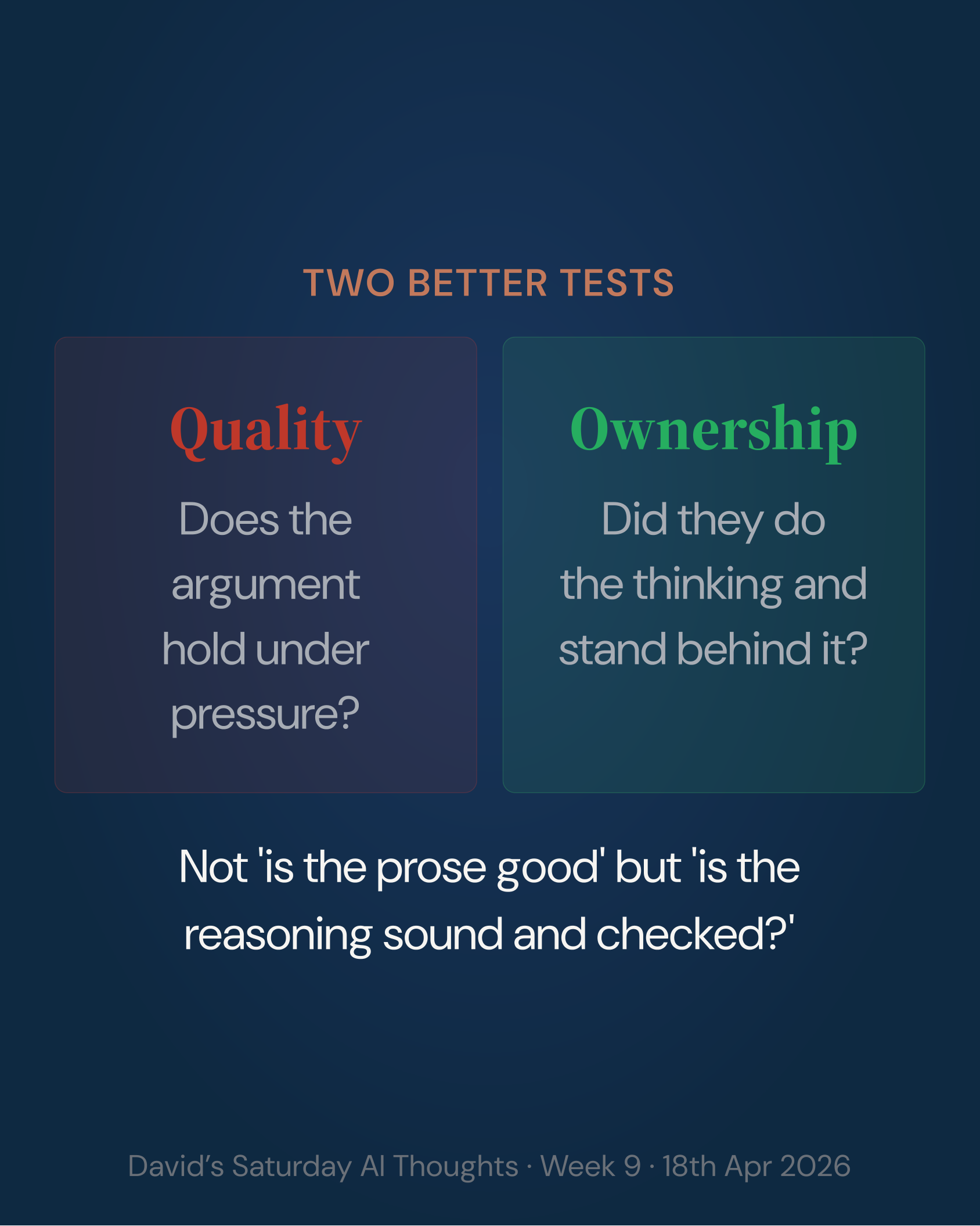

What readers said

Last week's "The proxy break" drew the strongest response yet. The essay about writing, identity and AI polish hit something personal. One reader described growing up treating correct English as a form of belonging, only to find AI stirring up the same anxieties about fitting in. They'd caught themselves search-and-replacing em dashes out of their own writing to avoid being accused of using AI. Another faced a real dilemma: a respected consultant sent a clearly AI-generated proposal, and they couldn't work out how to say so. A professor offered the sharpest reframe: craft versus mass production. Temu at one end, Huntsman at the other. We probably need both, they said, but we need to proceed with care. Full reader reactions online →

A lighter week on LinkedIn from the community, but several posts cut to the heart of the essay. John Gleeson, who runs a customer success community and investment fund, met Marc Benioff this week and came away with one message: the unit of value in software is shifting from access to outcomes. "Service as software, not software as service." Nick Graham, founder of Vertemis, a research and analytics consultancy, frames the same shift for insights teams: stop shipping decks, start shipping decisions. And Dylan Jones, co-founder of Bold Square, a communications and marketing advisory, notes that Zuckerberg is building an AI agent to help him be CEO, but the real story is the internal culture of employees sharing tools they've built. "Your job as a leadership team is mostly not to get in the way." More online →